The Hook: The Mirage of the Frictionless Mind

The prevailing narrative in the Silicon Valley-driven zeitgeist is that friction is a bug to be eliminated. We are told that removing mental effort (the struggle to draft an essay, the cognitive load of debugging code, the tedious synthesis of research) is the ultimate triumph of human progress. The promise is seductive: a world where we simply intend and the machine executes.

But this pursuit of the frictionless mind masks a growing psychological crisis. We are trading the biological muscle of critical thinking for synthetic shortcuts. Efficiency, when detached from engagement, does not produce mastery. It produces dependency.

The startling reality is that while large language models elevate the floor of individual performance, they can also lower the ceiling of collective innovation. According to empirical estimates from the 2026 Generative Fatigue Report, students using AI-generated support often achieve higher short-term grades, yet 83% of them cannot recall the text they have just produced. We are witnessing the birth of the Doing-Knowing Gap, where people can generate sophisticated outputs without understanding the principles underneath. This is not a productivity revolution. It is the onset of cognitive atrophy.

Deconstruction: The First Principles of Cognitive Offloading

To understand why we are so susceptible to AI dependency, we have to deconstruct the biological imperatives of the human brain. Evolution has shaped the mind to be a cognitive miser. We are hardwired to achieve maximum outcomes with minimum metabolic energy. That cost-benefit logic is the root of cognitive offloading: the strategic use of external aids such as notes, reminders, and now AI to reduce internal processing requirements.

The Evolution of the External Mind

Historically, cognitive offloading was limited to static storage or repetitive calculation. Writing allowed us to offload memory. The calculator allowed us to offload arithmetic. GPS allowed us to offload navigation. In each of those cases, the offloaded task was discrete and lower-order. The core of thinking (synthesis, reasoning, and judgment) remained firmly human.

This is best understood through the lens of Extended Mind Theory, which suggests that cognition is not bounded by the skull but extends into our tools. But when those tools move from assisting memory to simulating reasoning, the extension becomes an amputation.

Large language models represent a qualitative leap in this trajectory. They do not merely store or compute; they perform thinking-shaped activities. They paraphrase, translate, draft, and synthesize explanations in fluent language. When the external system can explain a concept faster and with less effort than our biological hardware, the brain’s rational response is to stop investing in internal capacity. That is the Google Effect (our tendency to forget information we know we can look up) on steroids: we are not just forgetting information; we are forgetting how to think.

The Friction: The Anatomy of Atrophy

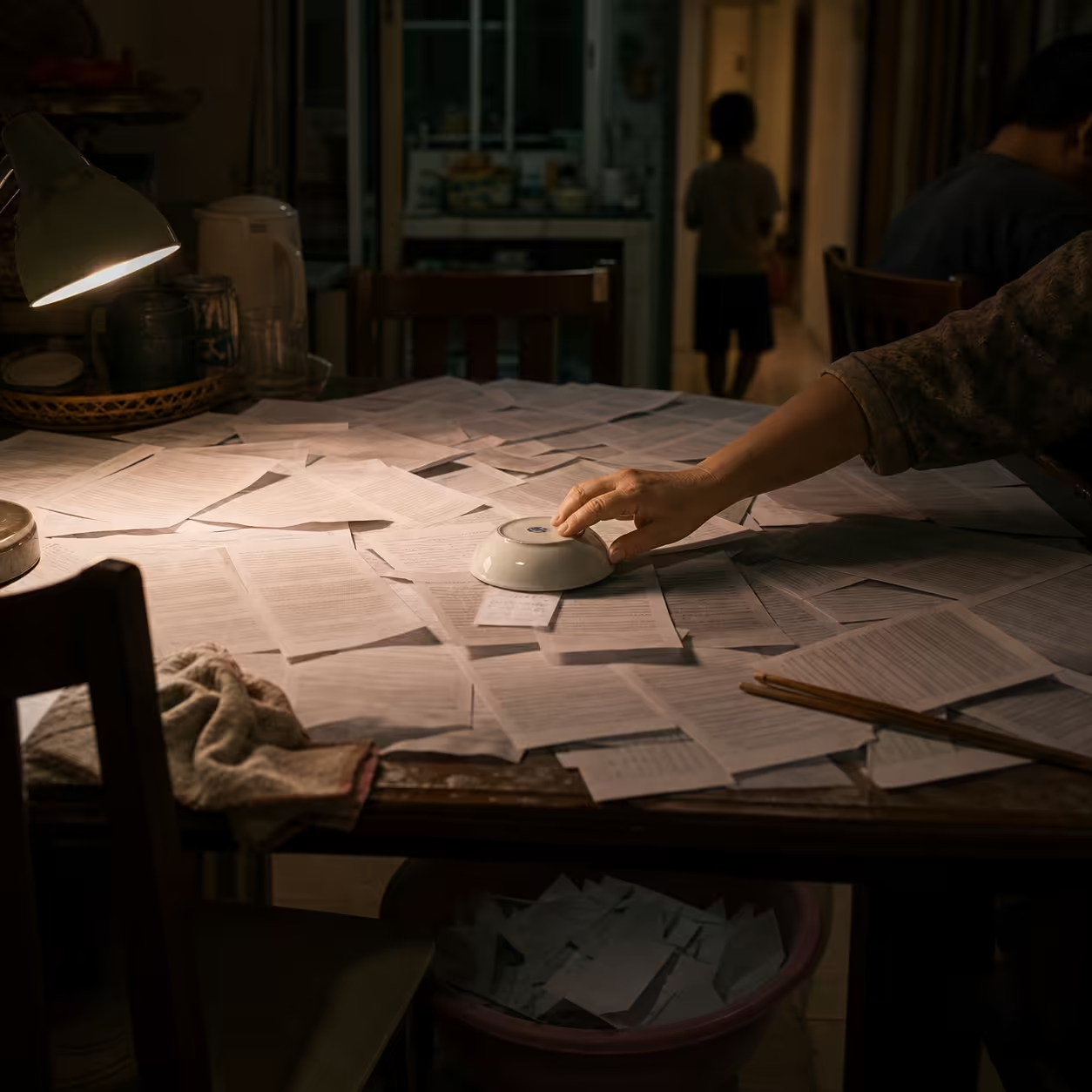

The Human Struggle: The Parable of the Paralyzed Expert

Consider Marcus, a senior developer with fifteen years of experience. For the last year, Marcus has integrated GitHub Copilot so deeply into his workflow that he no longer drafts function headers or logic loops manually. During a storm-induced internet outage, Marcus sits before his terminal, tasked with a critical server-side fix. He stares at a blank cursor for twenty minutes, unable to recall the syntax for a basic asynchronous map that he has “written” a hundred times with AI assistance.

The expert has become an observer. When the crutch is removed, the limb fails.

This transition from AI as assistant to AI as mental crutch follows a predictable decay pattern described by the Cognitive Atrophy Paradox, a framework that identifies four overlapping phases in which the human mind progressively externalizes its intellectual loop.

The Four Phases of the Cognitive Atrophy Paradox

- The Prompting Phase. The human remains the primary driver. AI is used for brainstorming or overcoming blank-page syndrome. The human still owns the analytical effort and creative direction.

- The Verification Phase. AI begins generating the primary substance. The human role is reduced to verifying the output, and the full process of creating work from scratch is no longer rehearsed.

- The Bypass Phase. The human increasingly skips the internal steps of synthesis. Algorithmic outputs are accepted as epistemic authority without deep scrutiny.

- The Dependency Phase. Structural cognitive weakening sets in. Like muscular atrophy, the neural circuits associated with independent reasoning deteriorate through prolonged disuse.
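
The four phases can be turned into a rough self-audit. The sketch below is a hypothetical heuristic, not part of the Cognitive Atrophy Paradox framework itself: the three yes/no questions in `WorkflowHabits` and the ordering of checks in `classify_phase` are assumptions chosen for illustration. Because the phases overlap in practice, collapsing them into a single label is a deliberate simplification.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    PROMPTING = 1
    VERIFICATION = 2
    BYPASS = 3
    DEPENDENCY = 4


@dataclass
class WorkflowHabits:
    # Hypothetical self-reported signals; this is not a validated instrument.
    human_drafts_first: bool   # Do I produce the first plan or draft myself?
    scrutinizes_output: bool   # Do I check AI output against my own reasoning?
    can_work_unassisted: bool  # Could I finish this task if the AI were unavailable?


def classify_phase(habits: WorkflowHabits) -> Phase:
    """Return the phase these habits most resemble (toy heuristic)."""
    if not habits.can_work_unassisted:
        # Losing the unassisted fallback is treated as the decisive sign of dependency.
        return Phase.DEPENDENCY
    if habits.human_drafts_first:
        # The human still owns the analytical effort.
        return Phase.PROMPTING
    if habits.scrutinizes_output:
        # AI drafts, but the human still checks the substance.
        return Phase.VERIFICATION
    # AI output is accepted without scrutiny, though the skill has not yet decayed.
    return Phase.BYPASS


if __name__ == "__main__":
    habits = WorkflowHabits(human_drafts_first=False, scrutinizes_output=True, can_work_unassisted=True)
    print(classify_phase(habits))  # Phase.VERIFICATION
```

The most useful question is the last one: running a task without the assistant, as the 24-hour challenge later suggests, is the only honest way to answer it.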

Automation Bias and the Expert Paradox

The friction is intensified by automation bias, the uncritical acceptance of machine output. When systems appear fluent and competent, users trust them even when the outputs conflict with their own knowledge. This is especially dangerous for novices. Experts may show algorithm aversion after seeing a visible error, but novices often lack the confidence to challenge the machine and slide into automation complacency.

Neurobiological Consequences: The Idle Brain and AICICA

The psychological shift toward dependency has measurable neurobiological correlates. Excessive reliance on AI chatbots can trigger AICICA (AI-Chatbot-Induced Cognitive Atrophy), a form of negative neuroplasticity in which the brain effectively learns not to use itself.

The Decaying Neural Infrastructure

EEG and neuroimaging research indicates that frequent AI users can exhibit a 50% reduction in brain connectivity, especially in the alpha and theta frequency bands, which are critical for focused attention and creative synthesis. The most affected regions include:

| Brain region | Function | What delegation does |
|---|---|---|
| Prefrontal cortex | Executive control, reasoning, planning | When AI dictates the structure of an email or the logic of code, the PFC stays idle. Constant delegation weakens analytical autonomy. |
| Hippocampus | Long-term memory and consolidation | AI stores and retrieves information on demand, bypassing the consolidation process and reducing knowledge transfer. |
| Parietal lobe | Information processing and spatial attention | Reduced connectivity appears when people routinely offload complex problem-solving to automated assistants. |

Foreclosure vs. Atrophy: The Developmental Crisis

A critical distinction must be made between adults and developing minds.

For adults, using AI to offload a task leads to atrophy, a weakening of an already-developed skill that can potentially be rebuilt. For a student offloading a task they have never truly learned, the result is foreclosure. The neural pathways for constructing arguments and evaluating sources are never formed. That is an irreversible failure to develop foundational cognitive faculties.

A landmark study by Shen and Tamkin in 2026 reported that software developers who fully delegated tasks to AI produced working code but suffered a 17% reduction in conceptual understanding and failed quizzes that required them to debug the code the AI had written.

The Synthesis: Engineering Mindful Friction

If the failure of current AI implementation is the total removal of friction, then the solution is the intentional design of mindful friction. The goal is not to eliminate cognitive effort but to preserve the kind of effort that produces understanding.

That means moving from a vending-machine model (prompt in, answer out) to a dialectical-partner model (dialogue in, understanding out).

The Socratic Workflow: AI as a Debater

To leverage AI without eroding problem-solving skills, professionals should transition to Socratic prompting. This approach weaponizes curiosity and forces the model into the posture of a relentless seminar leader rather than a servant.

The Locuno Socratic Routine:

- Interrogation Before Execution. Tell the AI: “You are a Socratic Analyst. Do not provide recommendations yet. Ask me the minimum set of clarifying questions needed to define my goal.”

- Assumption Surfacing. Direct the AI to identify three hidden beliefs or unstated constraints in the initial request.

- The Why-Chain. Use iterative inquiry: ask the AI to keep asking “why” until the foundational assumption beneath a claim is exposed.

- Test-First Anchoring. Before generating code or strategy, have the AI draft a test suite that must fail until a real solution exists. This creates an objective definition of done that survives hallucinations.
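
One way to keep the routine honest is to script it as an ordered list of prompts and send them one turn at a time, answering the model’s questions between turns. The sketch below assumes nothing about any particular chat API: `socratic_routine` and its parameters are hypothetical, the first prompt reuses the wording of the routine above, and the remaining prompt wording is illustrative.

```python
from typing import List, Tuple


def socratic_routine(goal: str, claim: str, why_depth: int = 3) -> List[Tuple[str, str]]:
    """Return (step, prompt) pairs in the order the routine prescribes.

    `claim` is the belief the Why-Chain should drill into; `why_depth` is how
    many "why" prompts to ask in total (values below 1 are treated as 1).
    """
    steps = [
        # 1. Interrogation Before Execution.
        ("interrogation",
         "You are a Socratic Analyst. Do not provide recommendations yet. "
         "Ask me the minimum set of clarifying questions needed to define my goal. "
         f"My goal: {goal}"),
        # 2. Assumption Surfacing.
        ("assumptions",
         "Identify three hidden beliefs or unstated constraints in my initial request."),
        # 3. The Why-Chain, starting from the stated claim.
        ("why-1", f"Why do we believe that {claim}?"),
    ]
    for level in range(2, max(why_depth, 1) + 1):
        steps.append((f"why-{level}",
                      "Why does your previous answer hold? Keep going until we reach "
                      "an assumption that cannot be justified further."))
    # 4. Test-First Anchoring.
    steps.append(("test-first",
                  "Before proposing any code or strategy, draft a test suite that defines "
                  "done. Every test should fail until a solution is implemented."))
    return steps


if __name__ == "__main__":
    for step, prompt in socratic_routine("cut API latency", "caching will fix our latency problem"):
        print(f"[{step}] {prompt}")
```

Keeping the steps as data rather than one long prompt forces a pause after every turn, and that pause is where the human does the thinking.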

The Sandwich Method: A Framework for Authentic Output

To avoid the slop penalty (the generic, homogenized quality of pure AI output), professionals should adopt the Sandwich Method.

| Layer | Responsibility | Primary action | Risk of skipping |
|---|---|---|---|
| Top bun | Human context | Define POV, audience pain points, and unique war stories. | Derivative slop: the content lacks soul and feels generic. |
| Filling | AI acceleration | Generate structure, summaries, and initial drafts within constraints. | Inefficiency: high manual effort for low-value tasks. |
| Bottom bun | Human verification | Fact-check, add specific insights, and refine voice and personality. | Brand erosion: hallucinations and errors destroy credibility. |
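
The three layers can be enforced in code so that neither bun can be skipped silently. This is a minimal sketch: `ai_fill` stands in for whatever model call you use (no specific API is implied), `human_verify` is a step a person actually performs, and the class and function names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class HumanContext:
    """Top bun: the human-owned inputs the AI cannot invent."""
    point_of_view: str
    audience_pain_points: List[str]
    war_stories: List[str]


@dataclass
class Draft:
    text: str
    verified: bool = False
    notes: List[str] = field(default_factory=list)


def sandwich(
    context: HumanContext,
    ai_fill: Callable[[str], str],
    human_verify: Callable[[Draft], Draft],
) -> Draft:
    # Top bun: refuse to start without first-hand human material.
    if not context.war_stories:
        raise ValueError("Top bun missing: add at least one first-hand story before drafting.")
    brief = (
        f"POV: {context.point_of_view}\n"
        f"Audience pain points: {'; '.join(context.audience_pain_points)}\n"
        f"Stories to build around: {'; '.join(context.war_stories)}"
    )
    # Filling: the AI only structures and drafts inside the brief's constraints.
    draft = Draft(text=ai_fill(brief))
    # Bottom bun: a human must sign off before the draft counts as done.
    checked = human_verify(draft)
    if not checked.verified:
        raise ValueError("Bottom bun missing: the draft has not been verified by a human.")
    return checked


if __name__ == "__main__":
    ctx = HumanContext(
        point_of_view="Friction is a feature of learning",
        audience_pain_points=["junior developers who cannot debug AI-written code"],
        war_stories=["the outage that took the coding assistant offline"],
    )

    def fake_ai(brief: str) -> str:
        return "OUTLINE\n" + brief  # placeholder for a real model call

    def editor_review(draft: Draft) -> Draft:
        draft.notes.append("facts checked, voice revised")
        draft.verified = True
        return draft

    print(sandwich(ctx, fake_ai, editor_review).verified)  # True
```

Raising an error instead of returning an unverified draft is the design choice that matters: skipping a bun becomes a visible failure rather than a quiet shortcut.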

The Critical Reflection: The Commodification of Thought

We also have to grapple with the ethical trade-offs. Major AI labs are positioning intelligence as a utility, metered and sold like electricity. In that worldview, the person whose cognitive life is being externalized becomes a mere recipient of conclusions rather than their author. That is borrowed certainty, a structural loss of agency.

Organizations measuring AI productivity gains often ignore cognitive debt. We are hiring graduates who are fluent in AI automation but incapable of the independent thinking that justifies premium compensation.

The Horizon: Reclaiming the Human Loop

The objective of a sophisticated AI strategy is not to maximize automation, but to optimize for cognitive sustainability.

The Locuno Mandate: A Micro-Challenge

To protect your mental muscles, I challenge you to a 24-hour cognitive fast. In the next 24 hours, perform one complex task (drafting a strategy, fixing a coding bug, or planning a project) without opening an AI interface. Use the Socratic method on yourself: write down your assumptions and interrogate them manually. You will feel your cognitive muscles ache. That ache is your brain reclaiming its territory.

The future belongs not to those who use AI to think less, but to those who use AI to think better. The elevator is always there: fast, easy, and tempting. But climbing the stairs builds the capacity the machine will never replicate: the human judgment that makes intelligence meaningful.

Reclaim your authorship. Interrogate the machine before it interrogates you.

References

- The Cognitive Atrophy Paradox: Delegation Drift and Automation Bias.

- Cognitive Dependency: Neurobiological Effects of AI on Brain Connectivity.

- Designing Human and Generative AI Collaboration: Impact on Idea Diversity.

- Adults Lose Skills to AI, Children Never Build Them: Foreclosure vs. Atrophy.

- AICICA: AI-Chatbot-Induced Cognitive Atrophy.

- Trust-Driven Routine GenAI Use and Cognitive Engagement Habits.

- The Borrowed Mind: OpenAI Policy and the Casualty of Cognition.

- How AI Shapes Creativity: Guarding Against Over-Reliance.

- Is AI Dulling Our Minds? The Cognitive Load of AI-Driven Solutions.

Reference Links

- https://pmc.ncbi.nlm.nih.gov/articles/PMC12678390/

- https://github.com/roy-reshef/socratic-ai-prompt-skill

- https://towardsai.net/p/machine-learning/the-socratic-prompt-how-to-make-a-language-model-stop-guessing-and-start-thinking

- https://deepfa.ir/en/blog/cognitive-dependency-ai-brain-effects

- https://arxiv.org/html/2601.22430v2

- https://www.psychologytoday.com/us/blog/the-digital-self/202501/the-shadow-of-cognitive-laziness-in-the-brilliance-of-llms

- https://www.scribd.com/document/959559153/How-to-Prompt

- https://www.mdpi.com/2078-2489/16/11/1009

- https://arxiv.org/html/2601.06172v1

- https://brainstorm.ie/articles/ai-content-will-cheapen-your-brand

- https://pmc.ncbi.nlm.nih.gov/articles/PMC11020077/

- https://www.wonsulting.com/job-search-hub/how-to-answer-ai-brand-voice-personal-style-interview-questions-like-a-pro

- https://alumni.williams.edu/alumni-career-commentary/your-ai-habits-today-will-determine-your-career-value-tomorrow/

- https://blogs.jaseci.org/blog/2026/03/10/socratic-prompt-method/

- https://sellershorts.com/resources/blog/how-to-write-ecommerce-blogs-2026

- https://sehd.ucdenver.edu/impact/2025/09/09/ai-prompting-socratic-method/

Published at: May 4, 2026 · Modified at: May 5, 2026
