Cognitive Erosion: The Digital Wellbeing Crisis of 2026

AI can make us look smarter while making us less so.

In one striking study, students who used an AI tutor produced better essays, yet most could not remember the content the next day. That result cuts against the popular story that AI automatically frees the human mind. Instead, by automating complex thinking, today’s AI can erode the very cognitive muscles it claims to help.

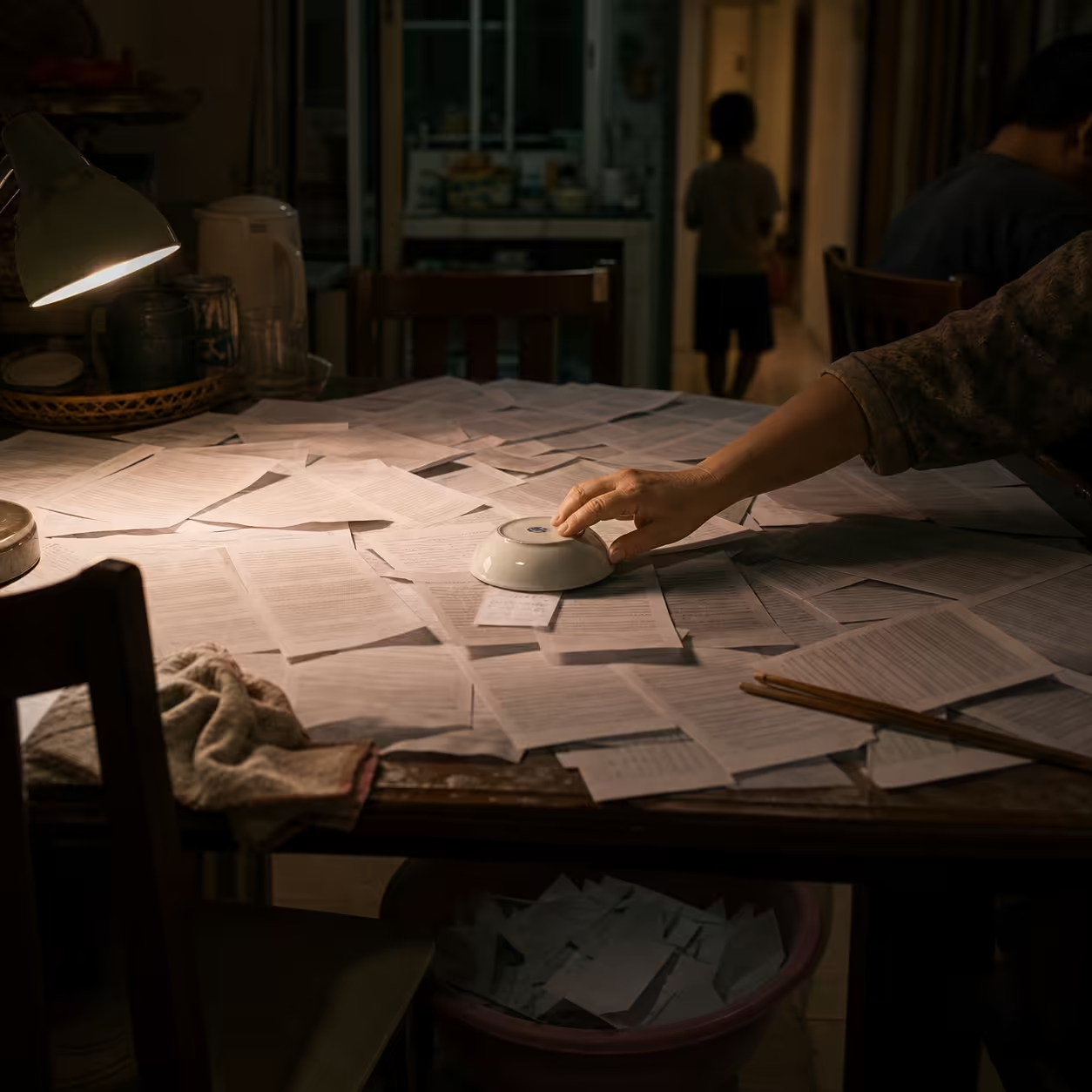

The danger in 2026 is not just misinformation. It is a silent digital wellbeing crisis: a generation offloads so much mental work to machines that its own reasoning, memory, and self-checking habits begin to weaken.

Deconstruction: Learning vs. Offloading

Two ideas from learning science frame the distinction: cognitive load theory, which separates the intrinsic effort a task demands from the extraneous burden around it, and desirable difficulties, the productive strain that makes learning stick. AI can be genuinely useful when it offloads extraneous burden such as grammar checking, fact lookup, or formatting. In that mode, it acts like training wheels: it reduces friction without removing the learner from the process.

That is beneficial offloading.

The problem begins when AI bypasses the intrinsic effort needed to build skill. If the machine does the thinking every time, the user stops practicing the underlying judgment. Over time, that turns into detrimental offloading. Users accept answers without challenge, stop checking outputs against their own understanding, and lose confidence in their own reasoning.

In practice, this means the AI can produce polished work while the human learns very little from the process.

Friction: Hallucination, Laziness, and Overconfidence

The cognitive threat is amplified by how AI systems behave under uncertainty.

Pure LLMs are rewarded for producing plausible continuations, not for admitting uncertainty. That makes them prone to confident fabrication. In effect, the system learns to sound right rather than to be right.

This creates a false sense of competence:

- The model appears fluent and authoritative.

- The user defers instead of checking.

- The mind becomes less active because the machine feels final.

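Researchers have described two related effects: metacognitive laziness, where users stop monitoring their own understanding, and cognitive overload, where the volume of output outpaces the capacity to evaluate it. The user keeps prompting, the output keeps coming, and the workday becomes an endless stream of machine-mediated decisions. Instead of simplifying life, AI can crowd the mind.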

Synthesis: Human-Centered AI That Scaffolds Skill

The answer is not to reject AI. The answer is to design AI as scaffolding rather than substitution.

A good system should:

- reduce busywork without removing judgment,

- surface uncertainty instead of hiding it,

- encourage reflection rather than passive acceptance,

- fade support over time so the human retains skill.

This is the digital centaur model: the machine extends capability, but the human stays cognitively engaged.

Two Patterns in Practice

| Pattern | What AI Does | What the Human Does | Risk |
|---|---|---|---|
| Beneficial offloading | Handles routine tasks and low-value friction | Focuses on higher-order thinking | Low, if the human stays engaged |
| Detrimental offloading | Replaces reasoning and decision-making | Passively accepts outputs | Skill atrophy and dependence |

A mindfulness app that suggests a breathing exercise while teaching self-regulation is an example of good scaffolding. An AI coding tool that writes everything while the user never learns to debug is not.

Case in Point: Two Engineers, Two Outcomes

Consider Alex, a software engineer who uses an AI pair programmer to move faster. At first, productivity improves. The AI writes boilerplate, fixes routine bugs, and documents functions. But after months, Alex finds that debugging subtle issues feels alien. The machine has done so much of the legwork that his own intuition has thinned out.

Now consider Sam. She uses the same assistant differently. She reviews every suggestion, rewrites key sections by hand, and asks herself why the answer should work before accepting it. Her AI use is still efficient, but it remains active rather than passive. Her skills stay sharp because the tool never fully takes over.

That contrast is the heart of the crisis.

Critical Reflection: The Cost of Convenience

Digital wellbeing is more than screen time. It is cognitive health.

When AI becomes a substitute for thinking, it can weaken:

- memory retention,

- confidence in independent judgment,

- tolerance for productive struggle,

- the ability to notice when something feels wrong.

That matters in education, but it also matters in workplaces, healthcare, and public policy. If we design AI mindlessly, we get output without growth.

The right standard is not whether AI can do the work. It is whether AI helps people become better at the work.

Horizon: Build AI That Coaches, Not Co-opts

The leaders of 2026 will be the organizations that demand AI tools that coach rather than co-opt. That means building systems that nudge users to explain their reasoning, review outputs, and stay in the loop.

Practical design moves include:

- reflective prompts that ask how a conclusion was reached,

- editing flows that require human justification,

- gradual reduction of support as competence grows,

- explicit uncertainty flags when evidence is weak.
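Two of these moves, gradual reduction of support and explicit uncertainty flags, can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `Suggestion` type, the `support_level` and `present` functions, the competence score, and every threshold are hypothetical, and a real product would need to decide how to estimate competence and confidence in the first place.

```python
from dataclasses import dataclass

# Hypothetical cut-offs, chosen only for illustration.
UNCERTAINTY_THRESHOLD = 0.6
HINT_FROM = 0.4
REVIEW_ONLY_FROM = 0.75


@dataclass
class Suggestion:
    text: str
    confidence: float  # assumed 0.0-1.0 score reported by the assistant


def support_level(competence: float) -> str:
    """Fade support as an assumed 0.0-1.0 competence estimate grows."""
    if competence < HINT_FROM:
        return "full_answer"
    if competence < REVIEW_ONLY_FROM:
        return "hint"
    return "review_only"


def present(suggestion: Suggestion, competence: float) -> dict:
    """Decide what the user sees: how much help, plus flags and a prompt."""
    level = support_level(competence)
    return {
        "support": level,
        # The full text is shown only at the highest support level.
        "text": suggestion.text if level == "full_answer" else None,
        # Surface uncertainty instead of hiding it.
        "uncertain": suggestion.confidence < UNCERTAINTY_THRESHOLD,
        # A reflective prompt that keeps the human in the loop.
        "reflection_prompt": "How would you check that this is correct?",
    }


if __name__ == "__main__":
    print(present(Suggestion("Use a set to deduplicate.", 0.45), competence=0.8))
```

The design choice worth noting is that the human-facing output changes with the user, not only with the model: a more competent user gets less text and more responsibility, while a low-confidence answer is flagged regardless of who is asking.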

In Locuno’s view, the goal is augmented wisdom, not atrophied minds. AI should amplify autonomy, competence, and relatedness. It should keep the human inside the loop as an active thinker, not a passive consumer.

References

- Lodge JM and Loble L. Artificial intelligence, cognitive offloading and implications for education. University of Technology Sydney report.

- Chirayath GC, Premamalini K, and Joseph JJ. Cognitive offloading or cognitive overload? How AI alters the mental architecture of coping. Frontiers in Psychology, 2025.

- Fan Y et al. Beware of Metacognitive Laziness: Effects of Generative AI on Learning Motivation, Processes, and Performance. arXiv:2412.09315.

- Lee H-P, Sarkar A, Tankelevitch L, et al. The Impact of Generative AI on Critical Thinking. CHI 2025.

- Sternberg RJ. Does AI increase cognitive abilities, decrease them, or a little bit of each? Frontiers in Education, 2026.

- Perry MJ. AI and the Rise of Cognitive Overload. George Mason University College of Public Health News, 2026.

- Hutka S. Designing AI to Think With Us, Not For Us. EPIC Proceedings, 2024.

- OECD Digital Education Outlook 2026: How can AI help human beings learn and grow?

Published at: Apr 23, 2026 · Modified at: May 5, 2026
