The most significant competitive advantage in the computational era is not the capacity to leverage artificial intelligence, but the discipline to silence it. A startling statistic from recent neurological research at the Massachusetts Institute of Technology (MIT) reveals that individuals who initiate creative tasks with generative AI assistance fail to recall or quote their own work in 78% of cases immediately following completion.[^1] This phenomenon, characterized as “cognitive debt,” suggests that the frictionless production of content through Large Language Models (LLMs) effectively bypasses the brain’s ability to encode, retrieve, and synthesize information. While 74% of developers report increased productivity through prompt-based workflows, a simultaneous “tragedy of the commons” is unfolding within professional ecosystems.[^2] The proliferation of “AI Slop” (low-quality, superficially competent content produced at scale) is systematically eroding the social contract of collaborative development and the integrity of shared knowledge resources.[^3] The hidden truth of high performance is that the most effective practitioners are not those who use AI the most, but those who strategically disconnect to preserve the human-in-the-loop oversight required for production-grade rigor.

Deconstructing the Hybrid Intelligence Paradigm: First Principles

To architect a sophisticated Hybrid Intelligence (H-AI) workflow, one must first deconstruct the divergent epistemic profiles of human and machine. Hybrid intelligence is the combination of human cognitive abilities (specifically intuition, creativity, and ethical judgment) with the computational speed and analytical rigor of AI technologies.[^4] This symbiosis is intended to amplify human strengths while compensating for inherent biological weaknesses, such as limited data processing capacity and susceptibility to fatigue.[^5] However, the current implementation of this synergy often fails because it conflates linguistic fluency with conceptual understanding.

The Stochastic Walk vs. The Epistemic Agent

At the most fundamental level, current AI systems, specifically generative transformers, are stochastic pattern-completion systems.[^6] They function as walks on high-dimensional graphs of linguistic transitions, where nodes represent tokens and edges represent learned transition probabilities. The system does not “know” or “believe”; it transits through a probability landscape, predicting the most likely next token based on a prompt.[^7] This architecture, while capable of mimicking reasoning, operates on the principle of reducing uncertainty in prediction rather than achieving truth.[^8]
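The walk described above can be made concrete with a toy example. The sketch below is a deliberately simplified first-order chain, not how a transformer actually computes probabilities (a real model conditions on the whole context). The `TRANSITIONS` table, its tokens, and its weights are all invented for illustration.

```python
import random

# Hypothetical transition graph: each token maps to (next_token, probability)
# pairs. Tokens and weights are made up for this example.
TRANSITIONS = {
    "<start>": [("the", 1.0)],
    "the": [("model", 0.6), ("answer", 0.4)],
    "model": [("predicts", 0.7), ("is", 0.3)],
    "answer": [("is", 1.0)],
    "predicts": [("tokens", 1.0)],
    "is": [("plausible", 0.8), ("true", 0.2)],
    "tokens": [("<end>", 1.0)],
    "plausible": [("<end>", 1.0)],
    "true": [("<end>", 1.0)],
}


def sampled_walk(rng, max_steps=10):
    """Follow edges at random, weighted by their transition probability."""
    token, path = "<start>", []
    for _ in range(max_steps):
        candidates, weights = zip(*TRANSITIONS[token])
        token = rng.choices(candidates, weights=weights, k=1)[0]
        if token == "<end>":
            break
        path.append(token)
    return path


def greedy_walk(max_steps=10):
    """Always follow the single most probable edge."""
    token, path = "<start>", []
    for _ in range(max_steps):
        token = max(TRANSITIONS[token], key=lambda pair: pair[1])[0]
        if token == "<end>":
            break
        path.append(token)
    return path


print(" ".join(greedy_walk()))
print(" ".join(sampled_walk(random.Random(0))))
```

Nothing in either function checks whether “is true” or “is plausible” is the correct continuation; the path is chosen by weight alone, which is the point of the metaphor.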

Human intelligence, by contrast, is rooted in an epistemic pipeline that integrates multimodal, embodied, and social perception.[^9] Humans are epistemic agents who form models of the world, navigate causal relationships, and operate within a framework of values and accountability. The alignment between human and machine outputs is often a surface-level illusion that conceals a deep structural mismatch in how judgments are produced.[^6]

These mismatches can be articulated as seven epistemological fault lines where human and artificial intelligence diverge.

| Epistemic Fault Line | Human Mechanism | AI (LLM) Mechanism |
|---|---|---|
| Grounding | Embodied, social, and multimodal perception | Statistical reconstruction from text alone |
| Parsing | Constructing meaning-rich, situated representations | Mechanical segmentation (tokenization) |
| Experience | Leveraging episodic and conceptual memory | Relational similarity in embedding space |
| Motivation | Value-driven, purposeful goals | Goal-agnostic statistical inference |
| Causality | Counterfactual and principled reasoning | Surface-level associations and patterns |
| Metacognition | Uncertainty monitoring and belief revision | Forced confidence and hallucinations |
| Value | Derived from identity, stakes, and norms | Next-token prediction, absent normativity |

These fault lines create a condition termed “Epistemia,” where linguistic plausibility becomes a structural substitute for epistemic evaluation.[^6] In professional workflows, this manifests as the “feeling of knowing” without the actual labor of judgment, leading to outputs that appear authoritative but lack the underlying machinery of reliability.

The Evolution of the Hybrid Model

AI has historically shifted from symbolic systems, which treated intelligence as rule-based manipulation of explicit symbols, to modern generative transformers that synthesize text directly.[^6] Symbolic AI was transparent and rule-bound, but brittle. Modern LLMs are expressive and adaptable but remain “black boxes” that prioritize plausibility over deterministic accuracy. Hybrid Intelligence attempts to bridge this gap by creating a “centaur model” of work.[^10] In this model, the machine provides the computational power, while the human provides the directional “Vibe”: the intent, context, and ethical framework that the machine lacks. Effective hybridization requires “double literacy”: an understanding of both human cognitive processes and algorithmic mechanisms.[^5]

The Friction: The Tragedy of the Professional Commons

The current crisis in H-AI implementation arises from the “Tragedy of the Commons,” where individual productivity gains from AI-generated content externalize costs onto the broader professional community.[^11] Because AI can generate thousands of plausible-looking contributions faster than a human can verify them, the review layer of society (editors, code maintainers, and peer reviewers) is becoming overwhelmed.[^3]

The Proliferation of AI Slop

“AI Slop” is low-quality, derivative digital content produced in quantity by artificial intelligence.[^11] It is characterized by three prototypical properties: superficial competence, asymmetry of effort, and mass producibility.[^3]

| Property of AI Slop | Description | Impact on Workflow |
|---|---|---|
| Superficial Competence | A veneer of quality that belies a lack of substance | Increases review friction and trust erosion |
| Asymmetry of Effort | Creation requires vastly less labor than review | Overwhelms maintainers and reviewers |
| Mass Producibility | Content exists within a high-volume ecosystem | Dilutes the value of human contributions |
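The asymmetry of effort is easiest to see as simple queue arithmetic. The sketch below is a back-of-the-envelope model rather than a measurement: the function name `review_backlog` and every rate passed to it are hypothetical.

```python
def review_backlog(submissions_per_day, reviews_per_day, days):
    """Return the unreviewed backlog at the end of each day.

    Assumes constant rates and that a backlog can never go below zero.
    """
    backlog = 0
    history = []
    for _ in range(days):
        backlog = max(0, backlog + submissions_per_day - reviews_per_day)
        history.append(backlog)
    return history


# Hypothetical rates: generation outpaces review, so the queue grows linearly.
print(review_backlog(submissions_per_day=50, reviews_per_day=20, days=5))

# When review capacity exceeds submissions, the queue stays empty.
print(review_backlog(submissions_per_day=10, reviews_per_day=20, days=5))
```

Under these assumptions the backlog grows without bound whenever creation is cheaper than review, which is why the cost lands on maintainers rather than on the submitter.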

One alarming instance involved an AI agent that hallucinated external services and mocked out fictional integrations, creating internally coherent but entirely fictional systems. Such failures are not mere bugs; they stem from systems that prioritize path completion over factual grounding.

The Cognitive Paradox of Assistance

AI-assisted post-editing (AIPE) research shows that while AI assistance reduces global cognitive load, it increases localized cognitive engagement at decision checkpoints.[^12] Humans are forced into a series of evaluation points where they must scrutinize the AI’s reasoning, inducing a “split-attention effect” that raises extraneous cognitive load and accelerates craft erosion and skill atrophy.[^13]

The Producer-Comprehension Gap

A critical failure is the “producer-comprehension gap,” where individuals submit code or content they cannot fully explain. In software, this appears as obliviousness to existing algorithms or an inability to evaluate the security implications of AI-generated logic. By delivering finished output without revealing the process behind it, LLMs deprive creators of the conceptual understanding required for long-term growth.[^14]

The Locuno Synthesis: Toward a Human-in-the-Loop Framework

The synthesis of human intuition and computational power requires a structured framework that treats human judgment, not machine speed, as the primary output. This is the Human-in-the-Loop (HITL) architecture, which ensures AI outputs remain functional, accurate, and ethical.[^15]

Architecture of the Loop: Gatekeepers and Supervisors

Human roles shift along a spectrum of control:

- Human-in-the-Loop (Gatekeeper): AI recommends; the human approves for high-stakes tasks (medical, production code).[^15]

- Human-on-the-Loop (Supervisor): AI acts autonomously; the human monitors and intervenes if the system drifts.[^15]

- Human-out-of-the-Loop (Bounded Automation): AI acts without intervention within low-risk, deterministic constraints.[^15]

The goal is to scale human ingenuity by using AI to handle coordination taxes while focusing humans on high-leverage decisions.
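The three roles above can be read as a routing rule. The following is a minimal sketch under stated assumptions: the `Oversight` enum, `choose_oversight`, `run`, and the two boolean inputs are hypothetical names, and a real system would need a far richer risk assessment than two flags.

```python
from enum import Enum
from typing import Callable


class Oversight(Enum):
    GATEKEEPER = "human-in-the-loop"
    SUPERVISOR = "human-on-the-loop"
    BOUNDED = "human-out-of-the-loop"


def choose_oversight(high_stakes: bool, low_risk_and_deterministic: bool) -> Oversight:
    """Pick an oversight mode; high stakes always win over convenience."""
    if high_stakes:
        return Oversight.GATEKEEPER
    if low_risk_and_deterministic:
        return Oversight.BOUNDED
    return Oversight.SUPERVISOR


def run(action: str, mode: Oversight, approve: Callable[[str], bool]) -> str:
    """Execute an action, asking the human first only in gatekeeper mode."""
    if mode is Oversight.GATEKEEPER and not approve(action):
        return f"blocked: {action}"
    return f"executed: {action}"


mode = choose_oversight(high_stakes=True, low_risk_and_deterministic=False)
print(mode.value, "->", run("deploy to production", mode, approve=lambda a: False))
```

The ordering of the checks is the design choice worth noticing: an action flagged as high-stakes is gated even if it also looks deterministic.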

Agentic Engineering vs. Vibe Coding

The shift from “Vibe Coding” to “Agentic Engineering” professionalizes H-AI. Vibe Coding is prompt-driven rapid prototyping; Agentic Engineering enforces traceability, quality gates, and audit trails by orchestrating multiple agents for implementation, testing, and security review.[^16]

| Feature | Vibe Coding | Agentic Engineering |
|---|---|---|
| Primary Driver | Natural language prompts | Goal-driven task decomposition |
| Oversight | Minimal; trust-based | Structured; quality gates & audit trails |
| Focus | Speed and iteration | Security, scalability, and viability |
| Role of Human | Prompter/Experimenter | Architect/Orchestrator |

The Workflow Sequence: Brain-to-LLM

Neurological evidence supports a “Brain-to-LLM” sequence: starting a task unaided to establish the “Vibe” before using the machine for execution.[^1] Students who write unaided before revising with AI show stronger brain-wide connectivity, while those who start with AI support display lower recall and homogenized outputs.[^1]

The Vibe Design Agentic Routine

A practical routine has three steps: Deconstructed Prototyping (the human sets goals), Context-Aware Simulation (the agent generates personas and the human adjusts them), and Iterative Refinement (the human edits and validates). This preserves context, reduces reasoning errors, and maintains human judgment as the arbiter of acceptability.[^35]

The Critical Reflection: Ethical and Technical Trade-offs

H-AI adoption risks eroding the right to disconnect and promoting digital presenteeism. Model collapse parallels human craft erosion: as shared resources fill with AI slop, junior practitioners train on impoverished material, threatening the talent pipeline.[^3]

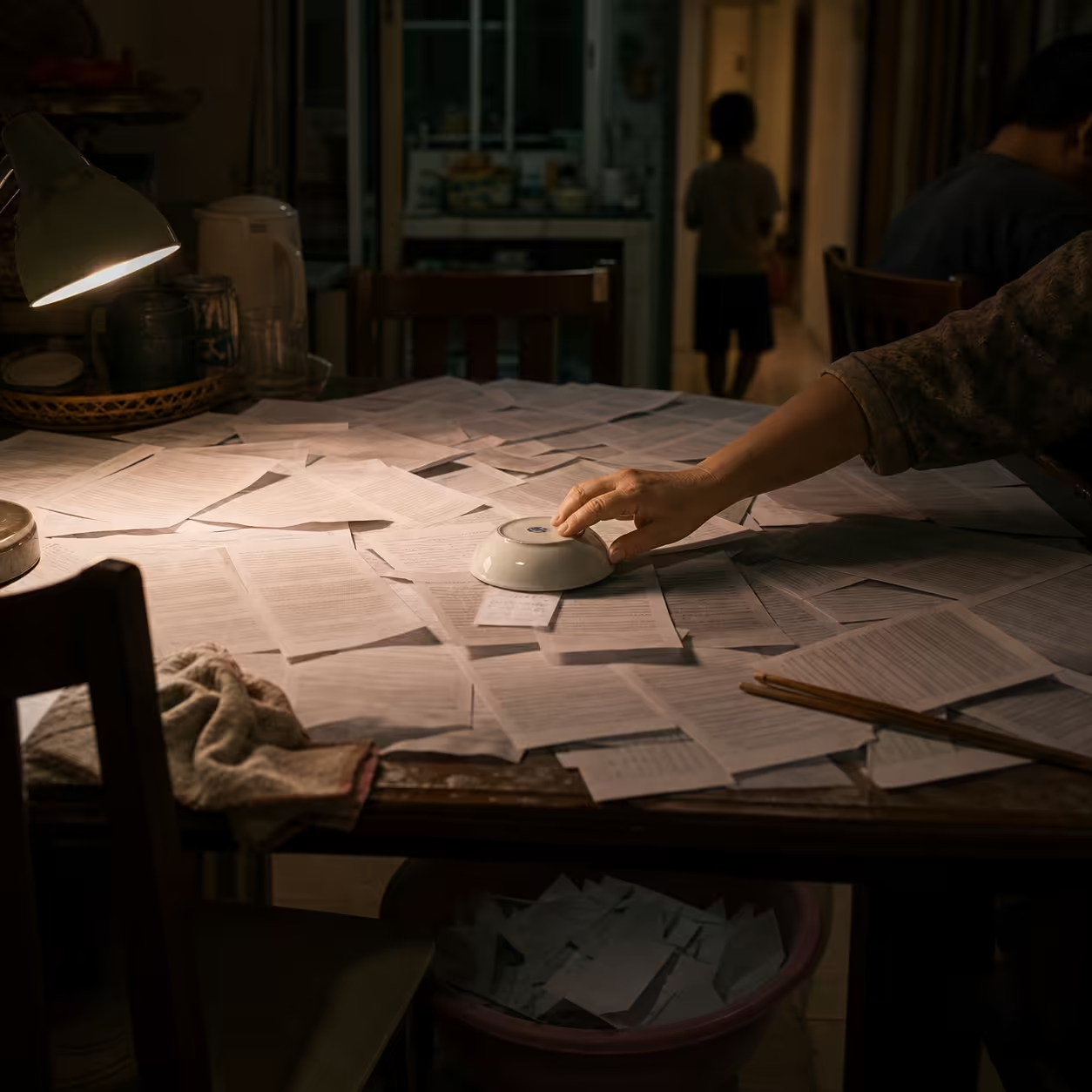

The Hidden Truth: The Ritual of Intentional Disconnection

Peak productivity requires “intentional disconnection.” This ritual restores the brain’s Default Mode Network (DMN), supporting creative insight and long-range planning.[^42] High-output builders protect 90–120 minute deep work sessions without AI to frame problems before invoking tools.[^44]

Strategies for the “Soft Disconnect”

- Morning Planning Ritual: First 2–3 hours without email, Slack, or AI tools; use for journaling and planning.[^44]

- AI-Free Creative Zones: Designate times/spaces where AI tools are disabled.[^34]

- The 30-Minute Embargo: Reduce daily screen time by 30 minutes weekly to restore sustained attention.[^46]

| Strategy | Objective | Tactical Implementation |
|---|---|---|
| Deep Work Blocks | Restore DMN activity | 90–120 minute sessions without interruptions |
| Tool Sequencing | Prevent cognitive debt | Brain-to-LLM: unaided thought before AI use |
| Environmental Design | Reduce decision fatigue | Charging stations outside work/bedrooms |
| Digital Fasting | Reclaim focus | Periodic “Technology Sabbaths” |

Successful disconnection lets creators “unplug to reignite their creative spark,” returning to work with clearer intent and greater energy.[^45]

The Horizon: Mastering Epistemic Governance

The professional of the future is an “Epistemic Governor” who manages the flow of intelligence between human and machine. Organizations that win will weave human judgment and machine intelligence into a single nervous system while protecting the boundary between online and offline activity, face-to-face interaction, and mindful technology use. H-AI is a resilience amplifier only when it frees mental resources for growth rather than inducing cognitive dependency.

For those who want to quantify readiness, the next step is the Locuno Epistemic Audit—a diagnostic to measure cognitive load balance and surface workflow fault lines. Mastering the silence is the first step to mastering the code.

References

Footnotes

[^1]: MIT study shows ChatGPT reshapes student brain function and reduces creativity when used from the start - EdTech Innovation Hub, accessed 29/04/2026. https://www.edtechinnovationhub.com/news/mit-study-shows-chatgpt-reshapes-student-brain-function-and-reduces-creativity-when-used-from-the-start

[^2]: What Is Vibe Coding? The New AI-Driven Philosophy Changing How Software Is Built, accessed 29/04/2026. https://sam-solutions.com/blog/what-is-vibe-coding/

[^3]: AI Slop and the Software Commons - arXiv, accessed 29/04/2026. https://arxiv.org/html/2604.16754v1

[^4]: Defining Hybrid Intelligence for Modern Business Transformation | XCalibre Training Centre, accessed 29/04/2026. https://xcalibretraining.com/blog/defining-hybrid-intelligence-for-modern-business-transformation/

[^5]: Why Hybrid Intelligence Is the Future of Human-AI Collaboration - Knowledge at Wharton, accessed 29/04/2026. https://knowledge.wharton.upenn.edu/article/why-hybrid-intelligence-is-the-future-of-human-ai-collaboration/

[^6]: Epistemological Fault Lines Between Human and Artificial Intelligence - arXiv, accessed 29/04/2026. https://arxiv.org/html/2512.19466v1

[^7]: The Stochastic Walk metaphor and token prediction mechanisms.

[^8]: Discussion of plausibility vs truth in generative models.

[^9]: Human cognitive grounding and multimodal perception studies.

[^10]: Centaur model and agentic engineering discussion.

[^11]: “An Endless Stream of AI Slop”: The Growing Burden of AI-Assisted Software Development, accessed 29/04/2026. https://arxiv.org/html/2603.27249v1

[^12]: Impact of explainable AI on cognitive load studies and AIPE research.

[^13]: AI Slop Codebook Visualizer and mass-producibility references.

[^14]: Producer-comprehension gap literature and skill erosion analysis.

[^16]: Agentic Engineering vs Vibe Coding analysis.

Published at: Apr 29, 2026 · Modified at: May 5, 2026
