Infocalypse Has Arrived

In 2026, Infocalypse is no longer a speculative warning. It is here.

With expert forecasts suggesting that as much as 90% of online content may now carry signs of AI intervention or full synthetic generation, reactive detection is failing badly. The new defensive frontier is no longer about asking whether something merely looks real. It is about building a Trust Supply Chain: a provenance layer that binds data to its history from the moment of capture.

In this era, trust is no longer the default. It must be proven.

Deconstruction: The First Principles of Digital Provenance

To defend against synthetic media, we have to reduce the digital artifact to its ground truth.

A real photograph begins with physical light striking a silicon sensor. That process leaves behind a unique silicon fingerprint, often modeled through Photo Response Non-Uniformity, or PRNU.
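To make the fingerprint idea concrete, here is a minimal sketch of PRNU-style matching on simulated data. Everything in it is an assumption for illustration: the 3x3 mean filter stands in for the wavelet denoisers used in real forensic pipelines, the 2% pattern strength is an illustrative value, and the function names are hypothetical. It estimates a sensor pattern from flat reference shots, then correlates the noise residual of a new image against that pattern.

```python
import numpy as np


def box_blur(img: np.ndarray, k: int = 3) -> np.ndarray:
    """k x k mean filter with edge padding; a crude stand-in for a real denoiser."""
    pad = k // 2
    padded = np.pad(img.astype(float), pad, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)


def noise_residual(img: np.ndarray) -> np.ndarray:
    """High-frequency part of the image: what is left after denoising."""
    return img - box_blur(img)


def estimate_fingerprint(images) -> np.ndarray:
    """Average the residuals of many shots so random noise cancels out."""
    return np.mean([noise_residual(im) for im in images], axis=0)


def correlation(a: np.ndarray, b: np.ndarray) -> float:
    """Normalized correlation between two zero-meaned arrays."""
    a = a - a.mean()
    b = b - b.mean()
    return float((a * b).sum() / (np.linalg.norm(a) * np.linalg.norm(b)))


def simulate_capture(brightness, prnu, rng, noise_sigma=1.0):
    """Toy sensor model: flat scene * (1 + per-pixel gain error) + random noise."""
    scene = np.full(prnu.shape, float(brightness))
    return scene * (1.0 + prnu) + rng.normal(0.0, noise_sigma, prnu.shape)


rng = np.random.default_rng(0)
shape = (64, 64)
prnu_a = rng.normal(0.0, 0.02, shape)  # simulated pattern of camera A
prnu_b = rng.normal(0.0, 0.02, shape)  # simulated pattern of camera B

reference_shots = [simulate_capture(b, prnu_a, rng) for b in rng.uniform(80, 200, 20)]
fingerprint_a = estimate_fingerprint(reference_shots)

same_camera = correlation(noise_residual(simulate_capture(150, prnu_a, rng)), fingerprint_a)
other_camera = correlation(noise_residual(simulate_capture(150, prnu_b, rng)), fingerprint_a)
print(f"same camera: {same_camera:.2f}, other camera: {other_camera:.2f}")
```

In this toy setup the same-camera correlation comes out high and the other-camera correlation sits near zero. Real images are far noisier, which is why the signal is fragile once content has been recompressed.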

By contrast, generative architectures such as diffusion models bypass the physical world entirely. They synthesize pixels from statistical distributions in latent space. The result can be visually perfect while remaining causally disconnected from a specific time, place, and device.

Traditional metadata such as EXIF is now a weak proxy for truth because social platforms act as context strippers: they aggressively compress files and remove metadata to optimize bandwidth. That vacuum is exactly where deepfakes thrive.

| Media Property | Authentic Capture (Sensor-Rooted) | Synthetic Generation (Model-Based) |
|---|---|---|
| Origin point | Physical photons on a silicon sensor | Statistical sampling from latent space |
| Hardware artifacts | Unique PRNU fingerprint | Generator artifacts, which fade over time |
| Verification basis | Cryptographic proof at capture time | Probabilistic artifact detection |
| Resilience to compression | High when provenance standards exist | Low, as traces are lost when compressed or forwarded |

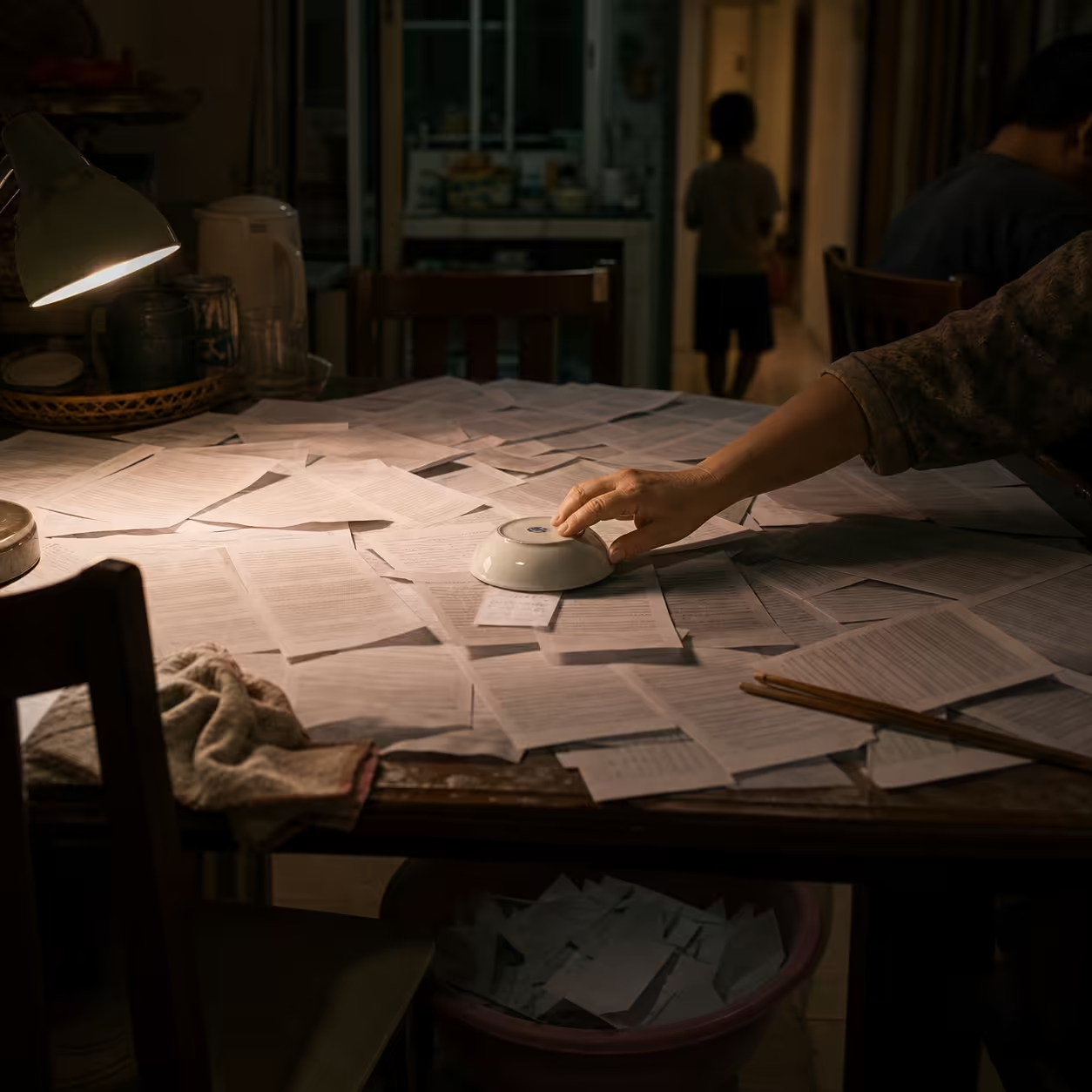

This crisis is not a failure of our eyes. It is a failure of infrastructure. We are using 20th-century assumptions of “seeing is believing” to navigate a 21st-century environment of systematic deception.

The Friction: The Rise of Epistemic Gaslighting

The current defense pattern - using one AI model to catch another - has hit a terminal bottleneck. Detectors return uncertainty, not certainty. A result like “82% synthetic origin” may sound technical, but in legal or operational settings it is a liability, not an asset.
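One reason a detector verdict is hard to act on is that it says little without a base rate. The sketch below applies Bayes' rule to a flagged item. The detection rate, false-alarm rate, and priors are all hypothetical numbers chosen for illustration, and `posterior_synthetic` is not from any real tool.

```python
def posterior_synthetic(prior: float, tpr: float, fpr: float) -> float:
    """P(item is synthetic | detector flagged it), by Bayes' rule.

    prior: assumed share of synthetic items in the stream being checked
    tpr:   assumed rate at which the detector flags synthetic items
    fpr:   assumed rate at which it wrongly flags authentic items
    """
    flagged = tpr * prior + fpr * (1.0 - prior)
    if flagged == 0.0:
        raise ValueError("detector never flags anything under these rates")
    return tpr * prior / flagged


# Same hypothetical detector, two different evidence streams.
for prior in (0.05, 0.50):
    p = posterior_synthetic(prior, tpr=0.82, fpr=0.10)
    print(f"prior {prior:.0%} -> posterior {p:.1%}")
```

With these assumed rates, a flag means roughly a 30% chance of synthetic origin when fakes are rare in the stream, and roughly 89% when they are half of it. The same detector output supports very different conclusions, which is part of why it fits poorly in legal or operational settings.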

That ambiguity fuels the Liar’s Dividend: powerful actors can dismiss authentic evidence as deepfake simply by exploiting public skepticism. We have entered the age of epistemic gaslighting, where synthetic media can implant false memory, distort perception, and push communities into tribal truth chambers.

Common failure modes in current defenses

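- Lab-to-deployment gap: detectors may reach 95% accuracy on benchmark data, then collapse to 45-50% in the wild, close to a coin flip, because of generator mismatch: the content they meet comes from generators they were never trained on.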

- Compression barrier: images and video forwarded through Zalo, LinkedIn, or other compression pipelines lose the microscopic signals needed for verification.

- Adversarial robustness: attackers inject invisible perturbations designed to fool detector networks.

The Synthesis: A Trust Supply Chain for Reality Verification

The Locuno Synergy Framework proposes a shift from passive detection to proactive provenance: a stack of physical, cryptographic, and human evidence.

Layer 1: Hardware-rooted trust

The strongest point of verification is the point of capture.

Signing Right Away (SRA) is an architecture in which trust is established inside the hardware itself.

SpotWize is a practical example of this layer. Unlike ordinary upload apps, SpotWize operates as a Reality-First application: it forces direct capture and blocks gallery uploads so the silicon fingerprint of the image is preserved at the source. The data being processed is exactly the data the sensor captured, with no intermediate software tampering.
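The sign-at-capture idea behind this layer can be sketched in a few lines. This is not the SRA design or SpotWize's implementation, neither of which is specified here. It is a toy under stated assumptions: an HMAC with a device-held secret stands in for the asymmetric signature that secure hardware would normally produce, and `CaptureRecord`, `sign_capture`, and `verify_capture` are hypothetical names.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CaptureRecord:
    pixel_hash: str   # SHA-256 of the raw sensor bytes
    captured_at: int  # Unix timestamp recorded at capture
    device_id: str
    signature: str    # HMAC over the three fields above


def _payload(pixel_hash: str, captured_at: int, device_id: str) -> bytes:
    """Canonical byte encoding of the fields that get signed."""
    fields = {"pixel_hash": pixel_hash, "captured_at": captured_at, "device_id": device_id}
    return json.dumps(fields, sort_keys=True).encode()


def sign_capture(pixels: bytes, device_id: str, device_key: bytes, captured_at: int) -> CaptureRecord:
    """Bind pixels, time, and device together at the moment of capture."""
    pixel_hash = hashlib.sha256(pixels).hexdigest()
    sig = hmac.new(device_key, _payload(pixel_hash, captured_at, device_id), hashlib.sha256)
    return CaptureRecord(pixel_hash, captured_at, device_id, sig.hexdigest())


def verify_capture(pixels: bytes, record: CaptureRecord, device_key: bytes) -> bool:
    """Check that the pixels match the record and the record was not altered."""
    if hashlib.sha256(pixels).hexdigest() != record.pixel_hash:
        return False
    expected = hmac.new(
        device_key,
        _payload(record.pixel_hash, record.captured_at, record.device_id),
        hashlib.sha256,
    ).hexdigest()
    return hmac.compare_digest(expected, record.signature)


key = b"secret-held-in-secure-hardware"  # assumption: never leaves the device
raw = b"\x10\x20\x30\x40"                # placeholder for raw sensor bytes
record = sign_capture(raw, "camera-001", key, captured_at=1_700_000_000)

print(verify_capture(raw, record, key))                              # True
print(verify_capture(raw + b"\x00", record, key))                    # False: pixels changed
print(verify_capture(raw, replace(record, captured_at=0), key))      # False: timestamp changed
```

The design point is that the signature covers the pixel hash, the time, and the device together, so editing any one of them after capture invalidates the record.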

Layer 2: Decentralized attestation

C2PA’s weakness is that it is metadata-centric, and metadata can be stripped.

The Birthmark Standard addresses this through consortium blockchain. Instead of depending on metadata inside the file, the system hashes pixel data and stores the record on a distributed ledger operated by trust brokers such as Reuters or the BBC. That enables metadata-independent verification: a user can hash a compressed image and query the blockchain to verify its hardware attestation.
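A sketch of what metadata-independent lookup could look like follows. The hashing scheme here is an assumption, not a description of the Birthmark Standard: a cryptographic hash of the pixels changes as soon as lossy compression alters any value, so this toy uses a simple perceptual hash (an 8x8 average hash) and matches by Hamming distance. The `Ledger` class is an in-memory stand-in for a distributed ledger, and all names are hypothetical.

```python
import hashlib
import numpy as np


def pixel_sha256(pixels: np.ndarray) -> str:
    """Exact hash of pixel values; any single changed pixel changes it."""
    return hashlib.sha256(np.ascontiguousarray(pixels, dtype=np.uint8).tobytes()).hexdigest()


def average_hash(pixels: np.ndarray, grid: int = 8) -> int:
    """Perceptual hash: grid x grid block means, thresholded at their overall mean."""
    h, w = pixels.shape
    trimmed = pixels[: h - h % grid, : w - w % grid].astype(float)
    blocks = trimmed.reshape(grid, trimmed.shape[0] // grid, grid, trimmed.shape[1] // grid)
    means = blocks.mean(axis=(1, 3))
    bits = (means > means.mean()).flatten()
    return int("".join("1" if b else "0" for b in bits), 2)


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


class Ledger:
    """In-memory stand-in for the distributed ledger of attestation records."""

    def __init__(self):
        self._records = []

    def register(self, pixels: np.ndarray, attestation: str) -> None:
        self._records.append((average_hash(pixels), attestation))

    def lookup(self, pixels: np.ndarray, max_distance: int = 5):
        """Return the closest attestation within max_distance bits, else None."""
        query = average_hash(pixels)
        best = min(self._records, key=lambda rec: hamming(rec[0], query), default=None)
        if best is not None and hamming(best[0], query) <= max_distance:
            return best[1]
        return None


y, x = np.mgrid[0:64, 0:64]
original = (3 * x + y).astype(np.uint8)              # synthetic gradient test image
compressed = ((original // 16) * 16).astype(np.uint8)  # coarse quantization as a compression proxy
unrelated = (252 - original).astype(np.uint8)        # inverted image

ledger = Ledger()
ledger.register(original, "attested by camera-001")

print(pixel_sha256(original) == pixel_sha256(compressed))  # exact hashes no longer match
print(ledger.lookup(compressed))                           # still resolves to the attestation
print(ledger.lookup(unrelated))                            # no record found
```

The trade-off is the matching threshold: a loose `max_distance` tolerates heavier compression but raises the risk that a different image collides with an attested one.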

Layer 3: High-friction human discernment

Technology can provide a nutrition label for information. Human intuition provides the final gate.

This is best illustrated by the Ferrari Challenge. In 2024, a Ferrari manager received a deepfake voice call mimicking CEO Benedetto Vigna in a convincing southern Italian accent, asking for help with a secret acquisition.

The manager did not use a detector. Instead, he applied a high-friction challenge: “Benedetto, what was the title of the book you recommended to me a few days ago?” The attacker had no access to that offline context and hung up immediately.
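For teams that want to rehearse this pattern, the same idea can be written down as a pre-agreed challenge. The sketch below is an assumption about how one might store such a secret, not something the Ferrari manager used: the agreed answer is kept only as a salted PBKDF2 digest, and a response is compared in constant time. The function names and the example answer are hypothetical.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 100_000  # PBKDF2 work factor, chosen arbitrarily for this sketch


def _normalize(answer: str) -> bytes:
    """Ignore case and extra whitespace so honest answers are not rejected."""
    return " ".join(answer.lower().split()).encode()


def enroll_secret(answer: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Store only a salted digest of the privately agreed answer."""
    salt = salt if salt is not None else os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", _normalize(answer), salt, ITERATIONS)
    return salt, digest


def passes_challenge(response: str, salt: bytes, digest: bytes) -> bool:
    """True only if the caller knows the offline context."""
    candidate = hashlib.pbkdf2_hmac("sha256", _normalize(response), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)


# Hypothetical secret agreed in person, never written in email or chat.
salt, digest = enroll_secret("The Example Book Title")

print(passes_challenge("  the example book   title ", salt, digest))  # True
print(passes_challenge("I do not recall", salt, digest))              # False
```

The code matters less than the property it encodes: the answer lives outside any corpus an attacker can scrape or clone from public audio and video.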

Case in Point: When Friction Saves the Truth

Effective defense is not just software. It is behavioral design.

In practice, the strongest verification steps are often the slowest ones:

- force capture on-device instead of allowing gallery uploads;

- compare provenance instead of relying on intuition;

- ask a reverse question that is not in the public corpus;

- require a link to physical origin or a clear custody chain.

Friction makes attack more expensive than defense.

Critical Reflection: Trust Nodes and Epistemic Tribalism

As the open web degrades into a low-trust slop zone, value migrates into Trust Nodes.

In 2026, your value is no longer defined only by what you know. It is also defined by which verification network you belong to.

That creates epistemic tribalism: communities bound by shared verification protocols and cognitive priors rather than geography. They share trust more readily than they share truth.

System-level attack classes

| Risk Category | Technical Mechanism | Impact on Trust |
|---|---|---|
| Epistemic gaslighting | False memory implantation via deepfake evidence | Eyewitness recall accuracy drops by about 30% |
| RAG poisoning | Injecting adversarial noise into retrieval datasets | Enterprise knowledge bases return hallucinated truth |
| Scene spoofing | Recording a screen displaying synthetic content | Bypasses single-device provenance chains |

The Horizon: A Strategy for the Age of Discernment

Reality verification is moving from a niche technical discipline to a universal survival skill.

For organizations, the operating routine must be provenance-first:

- Epistemic infrastructure. Deploy TEE-based capture devices and hardware-enforced apps such as SpotWize for any high-stakes data capture.

- Adversarial literacy. Train people to use high-friction verification questions grounded in private context rather than looking only for visual glitches.

- Reputational sovereignty. Build audience ownership through channels that are not easily scraped. In a world of synthetic smoothness, proof of physical work becomes the premium differentiator.

The future of trust lies in the fusion of silicon and soul: hardware provides proof of capture, blockchain provides history, and human intuition provides final judgment.

The friction of verification is our last line of defense against synthetic smoothness.

References

- Futurism. Experts: 90% of Online Content AI-Generated.

- arXiv:2602.04933. The Birthmark Standard.

- arXiv:2510.09656. Signing Right Away (SRA).

- OSF (2026). Agentic Parapsychological Ψ-Cybercrime.

- arXiv:2604.17023. The Verification Bottleneck.

- Bloomberg / CyberGuru. Ferrari CEO Deepfake Incident.

Sources

- https://futurism.com/the-byte/experts-90-online-content-ai-generated

- https://arxiv.org/pdf/2602.04933

- https://arxiv.org/pdf/2510.09656

- https://osf.io/download/689472528fcaacf9006b0c18/

- https://arxiv.org/pdf/2604.17023

- https://www.cyberguru.it/en/2024/08/19/deepfake-ferrari-scam-foiled/

- https://www.trantorinc.com/blog/digital-provenance-ai

- https://erichorvitz.com/Authentication_media_provenance_AMP.pdf

- https://www.it-daily.net/shortnews-en/how-a-clever-ferrari-manager-exposed-a-deepfake

- https://danielbmarkham.com/the-new-news/newspaper-morgue/2026-01/newspaper-research

- https://scholarlycommons.law.wlu.edu/cgi/viewcontent.cgi?article=1190&context=wlulr-online

- https://www.researchgate.net/publication/396459532_Signing_Right_Away

- https://rjwave.org/ijedr/papers/IJEDR2504621.pdf

- https://whoiswenz1k.medium.com/you-cant-tell-what-s-real-anymore-81c8b4be328f

- https://opendatascience.com/nina-schick-expert-90-of-online-content-could-be-generated-by-ai/

Published at: Apr 25, 2026 · Modified at: May 5, 2026
