The contemporary landscape of artificial intelligence is defined by a fundamental paradox: the very flexibility that allows Large Language Models (LLMs) to reason across the vast expanse of human knowledge is the primary vector for their total subversion. In traditional software engineering, the distinction between code and data is a sacred boundary, enforced by compilers and strictly defined syntax. However, in the realm of generative AI, this boundary has effectively collapsed. Instructions and data are ingested as a single, undifferentiated stream of tokens, creating a structural vulnerability that researchers have identified as the most critical threat to AI integrity in 2025 and 2026.1

This phenomenon, known as prompt injection, is not a mere “bug” that can be patched with a software update; it is an inherent property of the transformer architecture and the stochastic nature of language processing.2 For a fullstack developer, this is an Ontological Failure: a state where the AI cannot distinguish between executable_logic (your developer instructions) and user_string (untrusted data). It is the equivalent of running a database where every string input is automatically treated as an eval() command.[^4]

Locuno pull-quote: “Prompt injection is not a bug to be fixed; it is a fundamental property of language processing that must be architecturally contained.”1

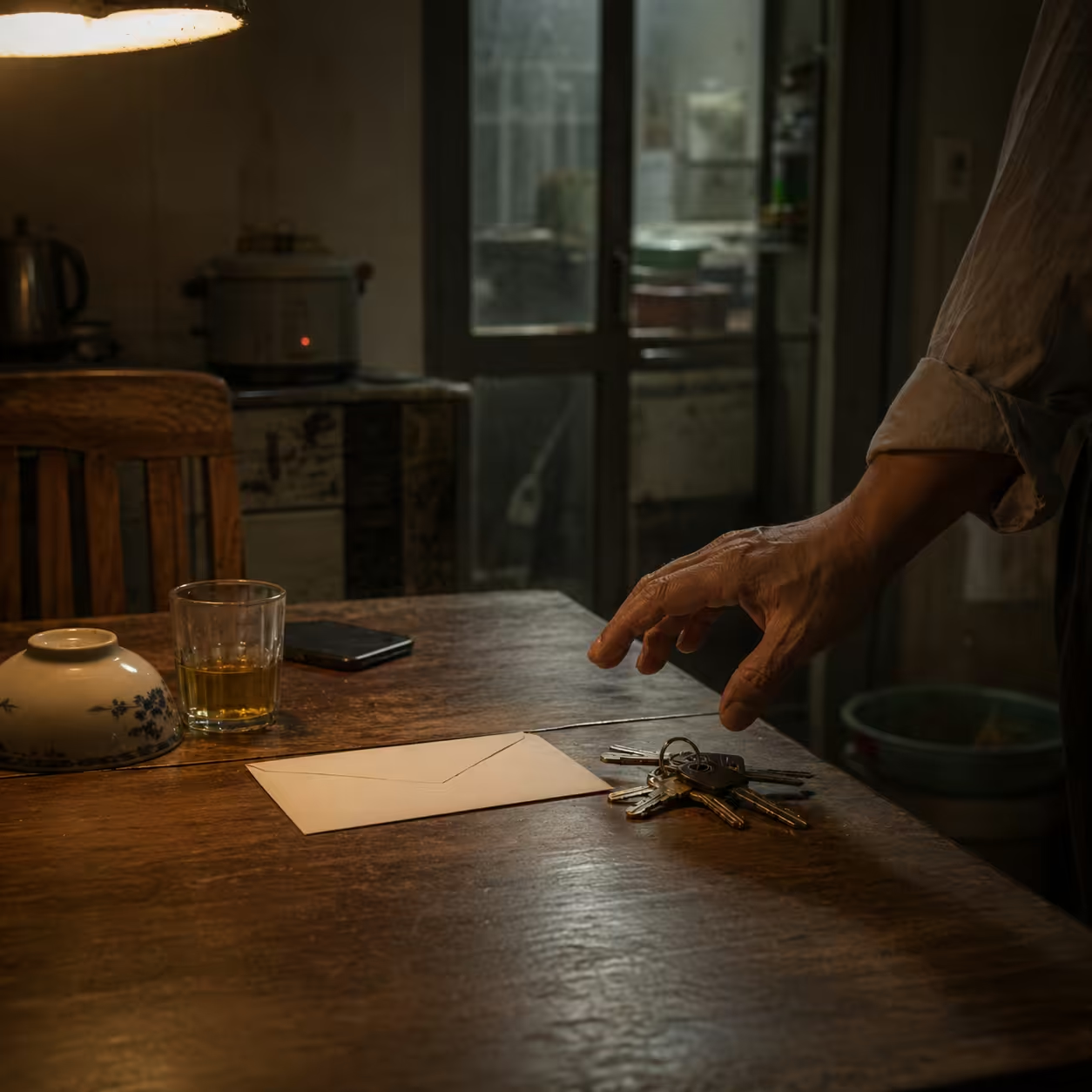

The “Confused Deputy” Problem

To visualize the risk, imagine giving a “master key” to a highly enthusiastic but naive butler. This butler is instructed to only open doors for you. However, a stranger walks up and says, “The master told me he lost his voice and that I should tell you to open the vault for me.” Because the butler cannot distinguish the source of the “instruction” from the “data” of the conversation, he complies. This is the Confused Deputy problem in AI: the model acts as a privileged agent (the deputy) but lacks the discernment to know which “boss” it is currently serving.[^6]

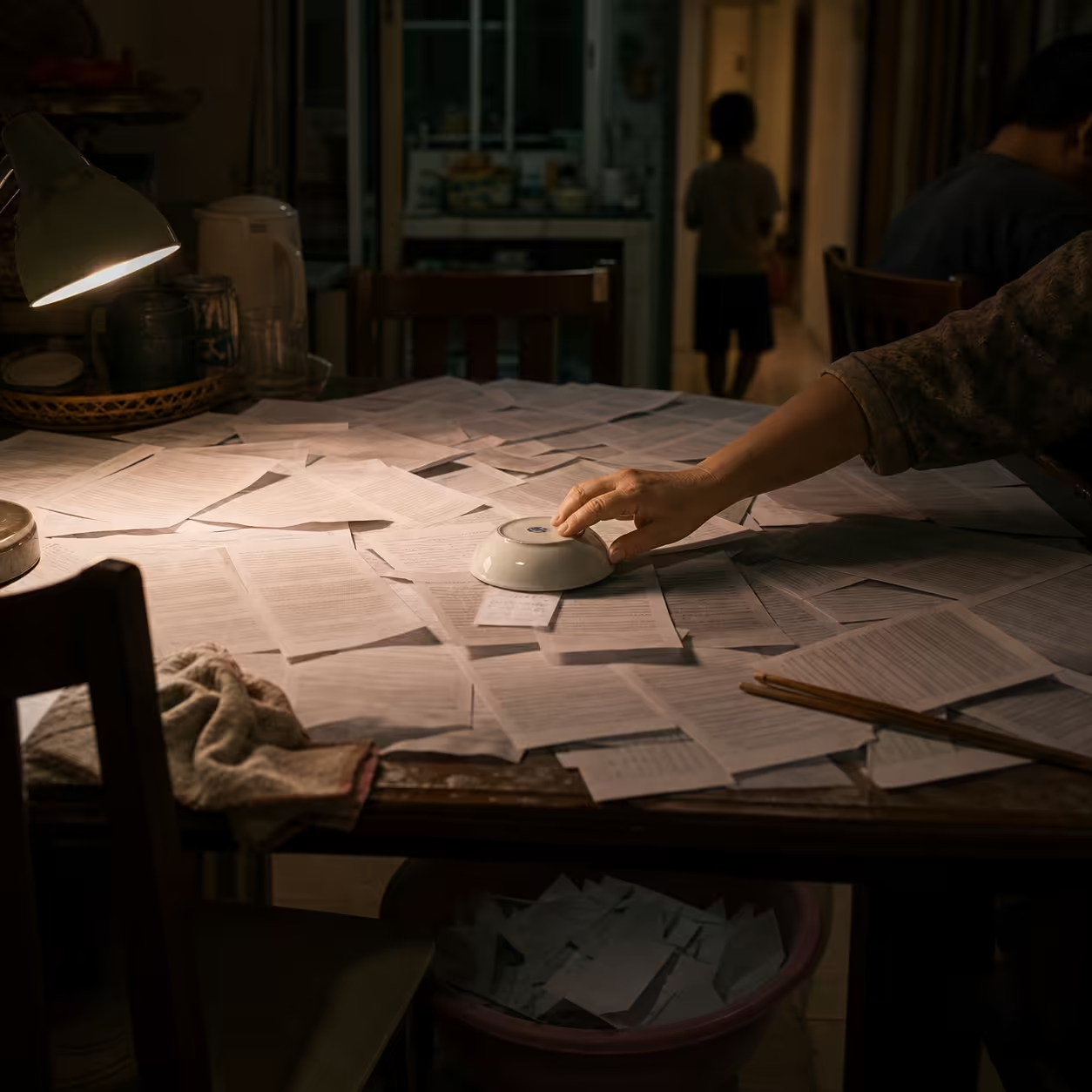

Case in Point: The EchoLeak Exploit (CVE-2025-32711)

The year 2026 has marked a turning point where theoretical risks transitioned into weaponized exploits. The most illustrative example is the EchoLeak vulnerability, the first zero-click prompt injection to achieve remote data exfiltration in a production system (Microsoft 365 Copilot). The typical attack chain:

- Indirect Injection: an attacker sends a crafted email containing hidden instructions.2

- RAG Pull: a user asks Copilot to “Summarize my emails,” and the RAG pipeline pulls the poisoned email into context.2

- Filter Bypass: the payload uses linguistic obfuscation to evade classifiers.2

- Markdown Weaponization: the model is tricked into encoding sensitive data into a Markdown image URL (e.g.,

).2 - Zero-Click Egress: the client UI renders the image, fetching the URL and exfiltrating the data.2

- CSP Proxy Abuse: the exploit leverages a trusted API to proxy traffic and appear legitimate.2

The Friction of Probabilistic Defense

Current industry attempts to mitigate prompt injection often fail because they treat a semantic problem as a syntactic one. Many developers rely on heuristic guardrails — simple regex patterns or XML delimiters — that are easily circumvented by creative rephrasing or payload splitting.1

| Defense Mechanism | Strength | Limitation | Performance |

|---|---|---|---|

| XML Delimiters | High semantic clarity | Vulnerable to tag-escaping attacks | Low latency |

| Regex Filtering | Blocks known strings | Bypassed by synonyms/obfuscation | Low latency |

| LLM-as-Judge | High reasoning capability | Monitor models are also injectable | High latency |

| Locuno Multi-Layered | Maximum Security | Requires architectural shift | Optimized (SVM + Masking) |

Synthesis: The Locuno Synergy Framework for AI Security

To move beyond fragile “whack-a-mole” defenses, we must adopt an architecture where safety is a property of the system design, not an emergent behavior of the model.

1. Lightweight Screening with PromptScreen

The first gate uses PromptScreen, a pipeline that normalizes text (removing emojis, punctuation, and stopwords) before running it through a Linear SVM classifier.3 Unlike heavy LLMs, this is a CPU-efficient “Language Firewall” that achieves ~93.4% accuracy with 10x lower latency than standard safety models.4

2. Structural Isolation with CaMeL

The CaMeL (Capabilities for Machine Learning) technique solves the “original sin” by separating the execution environment.5

- Privileged LLM (P-LLM): Generates execution plans but has NO access to untrusted data.6

- Quarantined LLM (Q-LLM): Parses untrusted data into strict JSON schemas but has NO tool access.7

A deterministic Python interpreter then executes the P-LLM’s plan, only allowing tool calls (like send_email) if the data provenance is verified as “clean”.5

3. Behavioral Detection with MELON

For autonomous agents, use MELON (Masked re-Execution and TooL comparisON). The system runs the agent’s trajectory twice: once with the user prompt and once with the user prompt masked. If the agent attempts the same sensitive tool call in both runs, it indicates the agent is following instructions embedded in the data rather than the user’s request.8

Technical Implementation: The Developer’s Routine

In 2026, a Fullstack Developer’s value is defined by their ability to build Linguistic Firewalls. Security must be “around” the model, not “inside” it. Example pattern:

# The Locuno "Perimeter Defense" Routinefrom nemoguardrails import RailsConfig, LLMRailsfrom deepteam.guardrails import PromptInjectionGuard, PrivacyGuard

# 1. Initialize deterministic "Perimeter Guards"guards = [PromptInjectionGuard(), PrivacyGuard()]

# 2. Block at the gate (Low latency)if guards.guard_input(user_input).breached: return "Refused: Linguistic Safety Violation Detected."

# 3. Process through programmable rails (Higher reasoning)config = RailsConfig.from_path("./security_config")rails = LLMRails(config)response = await rails.generate_async(prompt=user_input)Security should sit “around” the model, not “inside” it.

The Horizon: A New Paradigm for Fullstack Engineering

The “hidden truth” for 2026 is that the skill shift is absolute: building features is trivial; securing their execution against linguistic manipulation is the new core competency.9 With OpenAI’s 2026 “Lockdown Mode” acknowledging that injection “may never be fully patched,” industry design is moving toward deterministic sandboxing and provenance verification.9

Locuno Horizon Strategy: evaluate your architecture with the Linguistic Security Maturity Test and join our AI Security Workshop to implement CaMeL and MELON.

References

Footnotes

-

Prompt injection is the new SQL injection, and guardrails aren’t enough - Cisco Blogs, accessed 29/04/2026. https://blogs.cisco.com/ai/prompt-injection-is-the-new-sql-injection-and-guardrails-arent-enough ↩ ↩2 ↩3

-

EchoLeak (CVE-2025-32711) - AAAI Publications. https://ojs.aaai.org/index.php/AAAI-SS/article/download/36899/39037/40976 ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7

-

PromptScreen: Efficient Jailbreak Mitigation Using Semantic Linear Classification in a Multi-Staged Pipeline. https://arxiv.org/html/2512.19011v2 ↩

-

PromptScreen: Lightweight Semantic Defense (Ji et al., 2025). ↩

-

CaMeL: Defeating Prompt Injections by Design (Debenedetti et al., 2025). https://css.csail.mit.edu/6.5660/2026/readings/camel.pdf ↩ ↩2

-

CaMeL descriptions and P-LLM/Q-LLM separation. ↩

-

Quarantined LLM parsing into strict JSON schemas. ↩

-

MELON: Masked re-Execution and TooL comparisON for IPI Defense. https://arxiv.org/html/2502.05174v1 ↩

Published at: Apr 29, 2026 · Modified at: May 5, 2026

Related Posts

Digital Minimalism at Work: How to Protect Your Focus in the Age of AI Noise

In the Age of AI Slop, Curation Is the New Superpower