The AI ROI Paradox

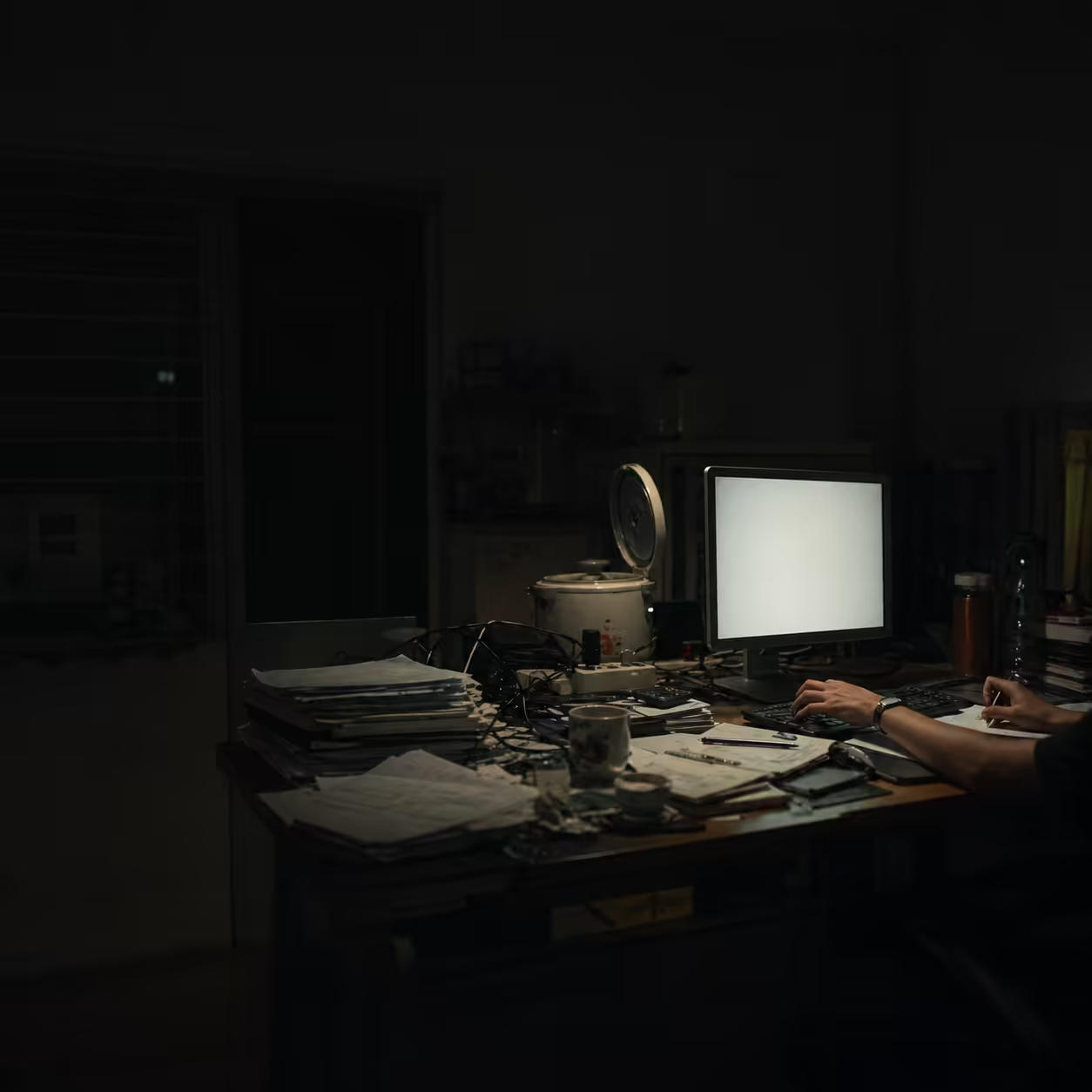

Boardrooms across the Global 2000 are facing an uncomfortable morning-after.

After a collective spending wave on generative AI, executives are staring at P&L statements and asking one blunt question: where did the money go?

While most enterprises now claim some form of AI usage, the honeymoon phase for large language models (LLMs) is over. We are in the era of the GenAI Divide.

- Most organizations remain trapped in expensive AI theater: flashy pilots that never reach durable production impact.

- A small elite treats AI as infrastructural capability and captures outsized gains in both revenue growth and cost reduction.

At Locuno, our thesis is straightforward: the core failure is rarely the model itself. The failure is the architecture of work around it.

Deconstructing the Zero-Return Problem

One of the most sobering findings in enterprise AI literature is that a large share of pilots fail to move quarterly financial outcomes in a measurable way.

This is not just low return. In many cases, it is operationally close to zero.

A recurring root cause is the Learning Gap:

- AI systems are deployed as isolated assistants rather than integrated operating components.

- Feedback loops are weak or absent.

- The system does not learn fast enough from company-specific context.

- Value creation remains disconnected from core workflows where margins are actually won or lost.

The Implementation Tax

Even teams that escape pilot purgatory often hit another wall: integration drag.

Across sectors, organizations report an Innovation J-Curve dynamic: productivity appears weaker during the early adoption phase as firms absorb platform, talent, and workflow redesign costs.

This creates a practical risk for leadership teams:

- If expectations are set for instant ROI, projects are cancelled in the trough.

- If the implementation tax is budgeted as a temporary phase, compounding gains can emerge later.
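The trough-then-recovery shape described above can be made concrete with a small calculation. This is a minimal sketch under invented assumptions: the monthly cost and benefit figures are hypothetical, and the function names (`cumulative_net_value`, `trough`, `break_even_month`) are illustrative rather than part of any Locuno tooling.

```python
from itertools import accumulate


def cumulative_net_value(monthly_costs, monthly_benefits):
    """Running total of (benefit - cost), one entry per month."""
    return list(accumulate(b - c for c, b in zip(monthly_costs, monthly_benefits)))


def trough(cumulative):
    """Return (month, value) at the deepest point of the curve (months are 1-indexed)."""
    value = min(cumulative)
    return cumulative.index(value) + 1, value


def break_even_month(cumulative):
    """First 1-indexed month with non-negative cumulative net value, or None."""
    for month, value in enumerate(cumulative, start=1):
        if value >= 0:
            return month
    return None


# Hypothetical figures in arbitrary currency units, for illustration only.
months = range(1, 25)
costs = [100 if m <= 6 else 40 for m in months]   # heavy build-out, then run cost
benefits = [min(10 * m, 120) for m in months]     # benefits ramp up, then plateau

curve = cumulative_net_value(costs, benefits)
print(trough(curve))            # deepest point of the J-curve
print(break_even_month(curve))  # month where cumulative value turns non-negative
```

With these made-up inputs the trough falls in month 6 and break-even arrives in month 13. A project reviewed only at month 6 would look like its worst possible self, which is exactly when cancellation pressure peaks.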

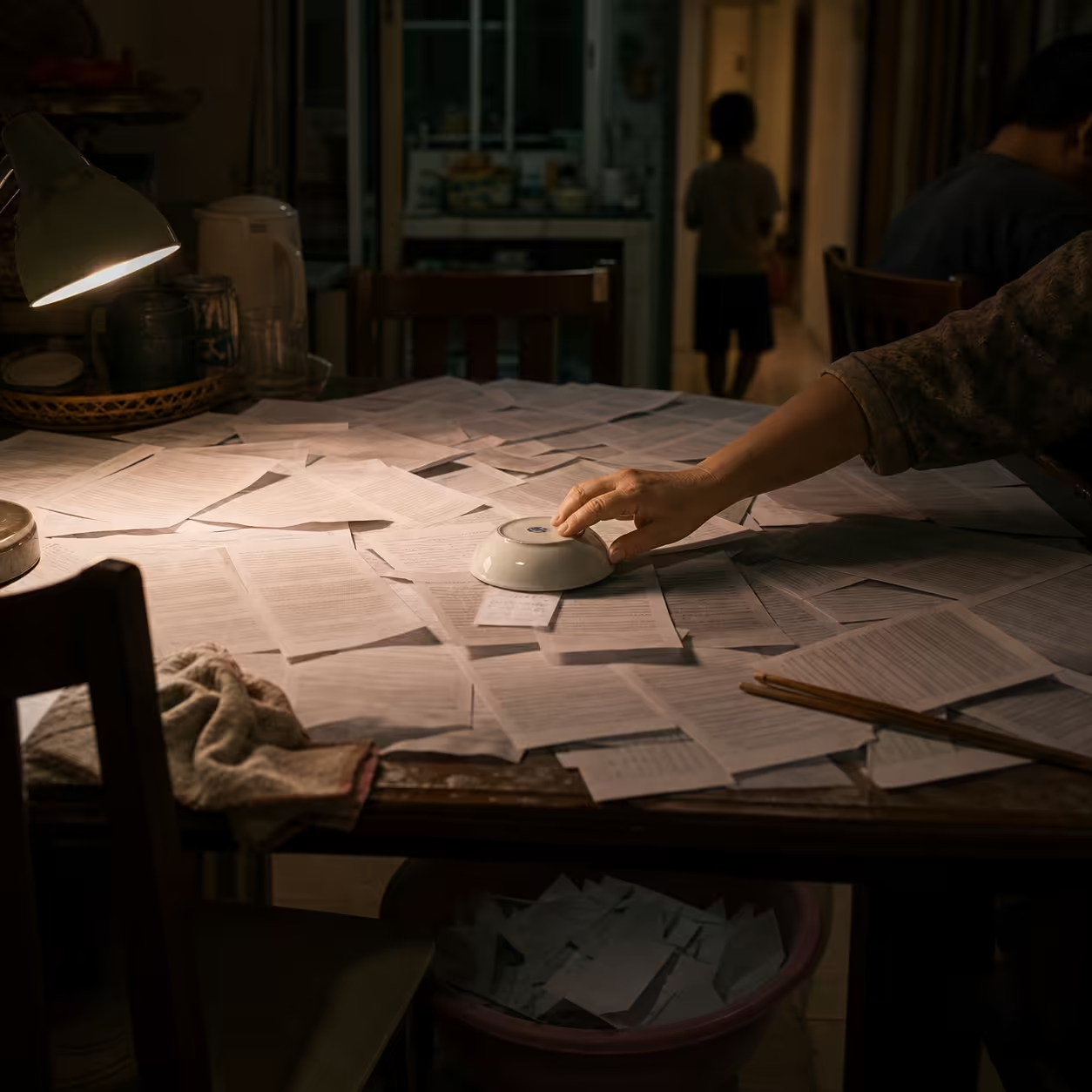

The Hidden Drain: Oscillation Fatigue

Most dashboards track time saved. Very few track cognitive cost.

In AI-augmented workflows, human labor shifts from creator to curator. That can increase output speed, but it can also intensify mental switching.

Typical loop:

- Do the work.

- Correct model errors or hallucinated output.

- Re-contextualize output for business constraints.

- Return to original reasoning thread.

This oscillation degrades concentration and deep judgment.

A frequent pattern in practice is the 70/30 illusion:

- AI gets teams to a plausible 70% quickly.

- The final 30% verification burden is long, expensive, and cognitively demanding.

If your dashboard ignores this burden, you may optimize machine throughput while silently eroding human sustainability.
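A rough way to see the gap between reported and real savings is to count verification time explicitly. The sketch below assumes hypothetical per-task hours; the function name `apparent_vs_real_savings` and all figures are illustrative, not measurements from the source.

```python
def apparent_vs_real_savings(baseline_hours, draft_hours, verification_hours):
    """Return (apparent, real) hours saved per task.

    apparent: what a dashboard reports if it only compares drafting time.
    real: what remains after the human verification burden is counted.
    """
    apparent = baseline_hours - draft_hours
    real = baseline_hours - (draft_hours + verification_hours)
    return apparent, real


# Hypothetical task: 10 hours by hand, 1 hour to reach a plausible AI draft,
# 6 hours to verify and finish the remaining portion.
apparent, real = apparent_vs_real_savings(10, 1, 6)
print(apparent, real)  # 9 3
```

In this invented case the dashboard would claim 9 hours saved while the team actually recovers 3. If verification grows past 9 hours, the real figure turns negative even though the apparent figure is unchanged.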

From Horizontal Slop to Vertical Value

A common executive trap is the Horizontal Fallacy: buying general-purpose copilots for everyone and expecting broad, visible savings.

In many cases, gains are diffused and difficult to monetize.

A stronger pattern is Vertical AI:

- Build systems tightly aligned to specific business bottlenecks.

- Anchor on proprietary, high-quality internal data.

- Optimize for one measurable outcome before scaling scope.

Lesson: value is rarely in generic fluency. Value is in domain-grounded reliability.

The Locuno ROI Dashboard: Metrics That Matter

A serious AI dashboard must track deltas between baseline process economics and AI-augmented economics.

1. Hard Financial KPIs

| KPI | Business Relevance |
|---|---|
| Cost per productive outcome | Total process cost divided by successful transactions. If a workflow drops from $45 to $12 with quality held constant, that is real ROI. |
| FTE equivalent saved | Aggregate validated time saved divided by average FTE hours, used for strategic redeployment rather than vanity time claims. |
| Shadow AI discovery | Measures unofficial tool usage. If most employees bypass enterprise tools, official ROI reporting is distorted. |
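The three KPIs above reduce to simple ratios. The following is a minimal sketch, not a reference implementation: the class and function names are hypothetical, the transaction volumes and survey counts are invented, and the 2,080 annual FTE hours (40 hours times 52 weeks) is an assumption. Only the $45 and $12 unit costs come from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessSnapshot:
    """Process economics for one period (baseline or AI-augmented)."""
    total_cost: float
    successful_transactions: int

    def cost_per_outcome(self) -> float:
        if self.successful_transactions <= 0:
            raise ValueError("need at least one successful transaction")
        return self.total_cost / self.successful_transactions


def fte_equivalent_saved(validated_hours_saved: float, avg_fte_hours: float = 2080) -> float:
    """Validated hours saved expressed in full-time equivalents."""
    return validated_hours_saved / avg_fte_hours


def shadow_ai_share(unofficial_tool_users: int, employees_surveyed: int) -> float:
    """Share of surveyed employees using unofficial AI tools."""
    return unofficial_tool_users / employees_surveyed


# Volumes are hypothetical; unit costs match the $45 -> $12 example in the table.
baseline = ProcessSnapshot(total_cost=45_000, successful_transactions=1_000)
augmented = ProcessSnapshot(total_cost=12_000, successful_transactions=1_000)

delta = baseline.cost_per_outcome() - augmented.cost_per_outcome()
reduction = delta / baseline.cost_per_outcome()

print(f"cost per outcome: {baseline.cost_per_outcome():.2f} -> {augmented.cost_per_outcome():.2f}")
print(f"reduction: {reduction:.1%}")
print(f"FTE equivalent saved: {fte_equivalent_saved(4_160):.1f}")
print(f"shadow AI share: {shadow_ai_share(60, 100):.0%}")
```

Dividing by successful transactions rather than all transactions is the design choice that matters here: a workflow that gets cheaper but fails more often will show a rising cost per outcome instead of a false saving.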

2. Human Stability Pillar

To prevent oscillation fatigue from wiping out gains, track:

- Cognitive Load Index: mental demand density in AI-assisted roles.

- TARAI-oriented governance indicators: transparency, accountability, recordability/documentability, and explainability.

- Moral Dissonance Frequency: how often professionals must execute black-box recommendations they do not trust.
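Of the three indicators, Moral Dissonance Frequency is the most directly countable. One possible reading, sketched below, is the share of executed AI recommendations that the professional reported not trusting. The log format, field names, and function name are all assumptions made for illustration; the source does not define how the metric is computed.

```python
def moral_dissonance_frequency(decision_log):
    """Share of executed AI recommendations the operator did not trust.

    Each entry is a dict with boolean keys "executed" and "trusted"
    (a hypothetical log schema). Returns 0.0 when nothing was executed.
    """
    executed = [d for d in decision_log if d["executed"]]
    if not executed:
        return 0.0
    distrusted = sum(1 for d in executed if not d["trusted"])
    return distrusted / len(executed)


# Hypothetical review log for one analyst over one week.
decision_log = [
    {"executed": True, "trusted": True},
    {"executed": True, "trusted": False},
    {"executed": True, "trusted": True},
    {"executed": True, "trusted": True},
    {"executed": False, "trusted": False},  # rejected outright, so not counted
]

print(moral_dissonance_frequency(decision_log))  # 0.25
```

Rejected recommendations are excluded on purpose: the metric targets cases where someone acted against their own judgment, not cases where they exercised it.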

The Horizon: How the Top 5% Win

Leading firms do not treat AI savings as found money. They reinvest into modular, interoperable foundations.

They are also shifting from chatbot-centric deployments toward agentic systems that can perceive, plan, and act within governed boundaries.

The strategic difference is compounding architecture, not one-off tooling.

Strategic Recommendations

- Stop bolt-on thinking. Apply a 10-20-70 allocation heuristic: roughly 10% model selection, 20% data/platform readiness, 70% people-and-process redesign.

- Redesign for bounded autonomy. If every output requires full manual rewrite, you do not have an asset. Target workflows where AI can produce one-click diagnostics that humans verify quickly.

- Budget the implementation tax explicitly. Set expectations for a 12-18 month ramp before net-positive unit economics in many enterprise contexts.

- Instrument cognitive sustainability. Treat burnout, trust, and review load as first-class economic variables, not HR side notes.
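The 10-20-70 heuristic from the first recommendation can be checked against an actual budget. This is a minimal sketch with hypothetical category keys and spend figures; it reports how far each share sits from the heuristic, in percentage points.

```python
# Target shares from the 10-20-70 heuristic; the key names are illustrative.
HEURISTIC = {
    "model_selection": 0.10,
    "data_platform": 0.20,
    "people_process": 0.70,
}


def allocation_gap(actual_spend):
    """Percentage-point gap between actual spend shares and the heuristic.

    Positive values mean over-allocation relative to the heuristic.
    """
    total = sum(actual_spend.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return {
        category: round(100 * (actual_spend.get(category, 0) / total - target), 1)
        for category, target in HEURISTIC.items()
    }


# Hypothetical programme that spent most of its budget on models and platform.
actual = {
    "model_selection": 400_000,
    "data_platform": 400_000,
    "people_process": 200_000,
}

print(allocation_gap(actual))
```

For this invented budget the result is +30 points on model selection, +20 on data/platform, and -50 on people and process, the bolt-on pattern the recommendation warns against.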

The GenAI Divide is not primarily a technical fork. It is a leadership mandate.

The question for every board is simple: does your dashboard reveal a path to durable infrastructural advantage, or just document another expensive experiment?

References

- MIT Media Lab discussion and secondary analyses on the GenAI Divide and pilot-to-P&L failure patterns (2025).

- BCG reports on AI value concentration and widening performance gaps (2025).

- arXiv analysis on implementation-tax dynamics in regulated sectors (2026).

- arXiv studies on developer productivity and spurious speed effects in GenAI workflows.

- Research and practitioner sources on human-AI collaboration metrics, cognitive load, and enterprise ROI instrumentation.

Sources

- https://sranalytics.io/blog/why-95-of-ai-projects-fail/

- https://www.sundeepteki.org/blog/the-genai-divide-why-95-of-ai-investments-fail

- https://www.bcg.com/publications/2025/closing-the-ai-impact-gap

- https://aimsys.us/blog/measuring-ai-roi-and-business-value

- https://www.duperrin.com/english/2025/12/08/impacy-ai-transformation-bcg-mckinsey/

- https://www.bcg.com/publications/2025/are-you-generating-value-from-ai-the-widening-gap

- https://www.demandlab.com/resources/blog/5-takeaways-report-on-the-state-of-ai-in-business/

- https://arxiv.org/pdf/2510.24265

- https://arxiv.org/html/2602.02607v1

- https://seosherpa.com/generative-ai-statistics/

- https://medium.com/@Fransantolo/95-of-corporate-generative-ai-projects-fail-mit-study-finds-47ad5d50db32

- https://medium.com/illumination/beyond-productivity-new-metrics-for-human-ai-collaboration-a22f0e18d7cc

- https://arxiv.org/html/2510.24265v2

- https://arxiv.org/html/2510.24265v1

- https://www.fullstack.com/labs/resources/blog/generative-ai-roi-why-80-of-companies-see-no-results

- https://www.softermii.com/blog/artificial-intelligence/how-to-measure-roi-from-ai-projects-kpis-frameworks-and-templates

- https://www.tandfonline.com/doi/full/10.1080/10447318.2026.2628994

- https://www.ere.net/articles/why-ai-efficiency-can-lead-to-burnout-in-recruiting

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- https://www.brianheger.com/the-reinvention-of-the-chro-in-an-ai-driven-enterprise-bcg/

- https://nmsconsulting.com/what-does-an-artificial-intelligence-consultant-do/

- https://plastergroup.com/insights/business-transformation-ai-requires

Published at: Apr 25, 2026 · Modified at: May 5, 2026
