The Orchestration Manifesto

By 2026, prompt engineering is no longer a premium skill. In many production contexts, it is a bottleneck.

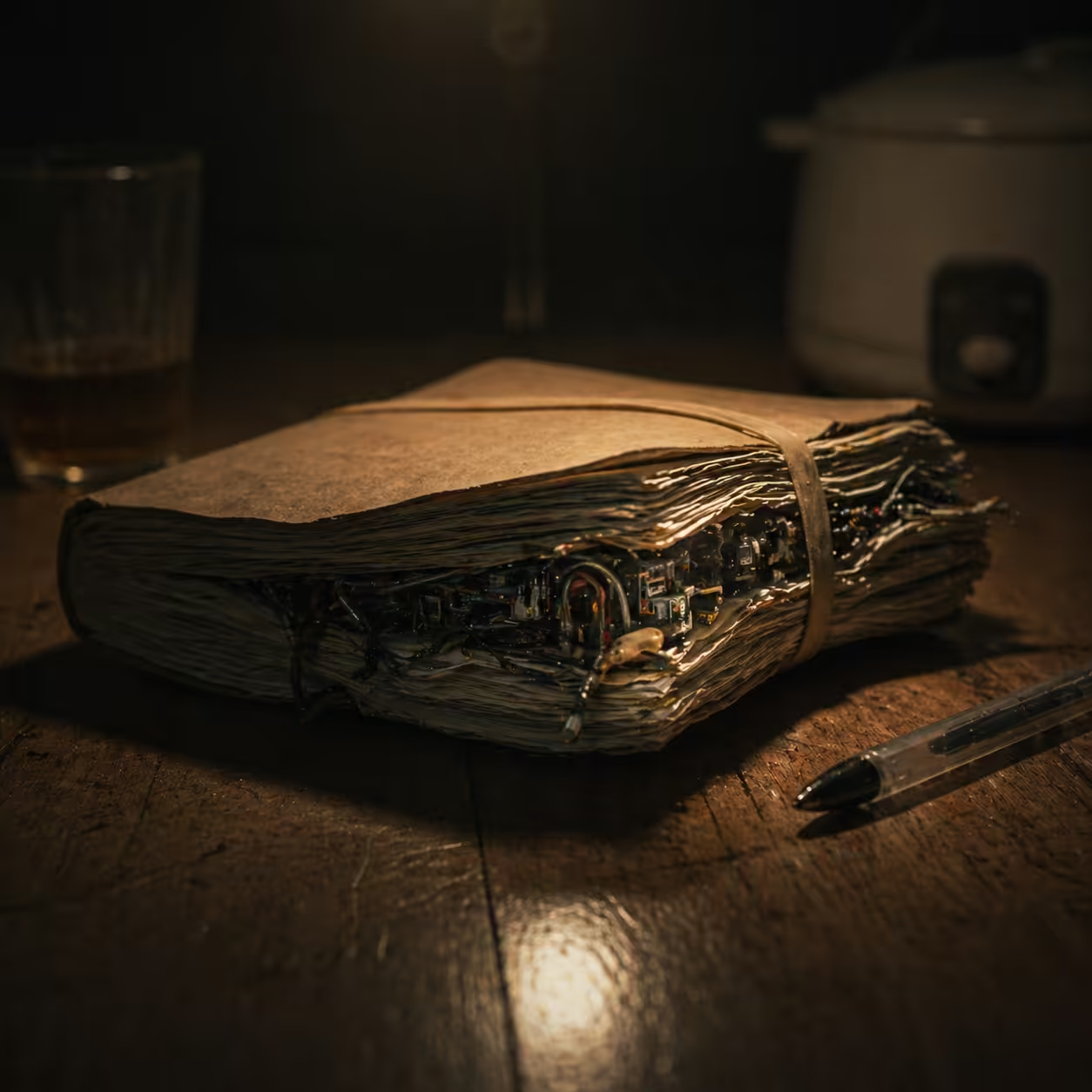

If a team is still cramming 100-page instruction sets into one chat session, it is not programming. It is probability gambling.

The industry has moved from digital incantations to architectural systems thinking. The shift from prompt-centric workflows to agentic orchestration is the sharpest platform inflection since cloud migration: from reactive completions to proactive, collaborative execution.

Deconstruction: First Principles of Agentic Logic

The single-agent paradigm fails because errors compound: every additional step multiplies in its own chance of failure.

Early LLM usage relied on stateless one-shot completion. That pattern is inherently brittle for deterministic enterprise workflows where reliability is non-negotiable.

The first principle of orchestration is this:

- treat the model as a stateless compute layer,

- move memory, policy, and truth constraints into system architecture,

- enforce outcomes through state-governed control paths.
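The three principles above can be sketched as a small state machine. This is a minimal illustration under stated assumptions, not a reference implementation: `call_model`, `verify`, the `Step` names, and the `WorkflowState` fields are all hypothetical, and the model is stubbed as a pure function of its input.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Step(Enum):
    DRAFT = auto()
    VERIFY = auto()
    DONE = auto()
    FAILED = auto()


@dataclass
class WorkflowState:
    """All memory and policy live here, outside the model."""
    task: str
    max_attempts: int = 3  # policy: retries are bounded by the system
    attempts: int = 0
    draft: str = ""
    step: Step = Step.DRAFT
    history: list = field(default_factory=list)


def call_model(prompt: str) -> str:
    """Hypothetical stand-in for a stateless model call."""
    return prompt.upper()


def verify(draft: str) -> bool:
    """Deterministic check owned by the system, not by the model."""
    return draft.isupper()


def run(state: WorkflowState) -> WorkflowState:
    """Drive the workflow; every transition is decided by explicit state."""
    while state.step not in (Step.DONE, Step.FAILED):
        state.history.append(state.step.name)
        if state.step is Step.DRAFT:
            state.attempts += 1
            state.draft = call_model(state.task)
            state.step = Step.VERIFY
        elif state.step is Step.VERIFY:
            if verify(state.draft):
                state.step = Step.DONE
            elif state.attempts < state.max_attempts:
                state.step = Step.DRAFT
            else:
                state.step = Step.FAILED
    return state
```

The model never sees or owns the attempt counter, the retry limit, or the transition rules. Swapping the stub for a real model call would leave the control path unchanged.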

For a linear chain of n steps that succeed independently, each with probability p, the probability that the whole chain succeeds is:

P_success = p^n

Example:

n = 10, p = 0.95

P_success = 0.95^10 ≈ 0.599

A nominally high per-step accuracy still yields about a 40% end-to-end failure probability. This is why monolithic prompts collapse in production.

Orchestration fixes this by introducing verification loops, retries, and logic gates at each node.
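The arithmetic is easy to check, and the same formula shows why per-node retries help. The sketch below rests on two simplifying assumptions that are not in the original argument: attempts are independent, and a verifier always detects a failed attempt. The function names are illustrative.

```python
def chain_success(p: float, n: int) -> float:
    """Success probability of n independent steps, each succeeding with p."""
    return p ** n


def step_success_with_retries(p: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries succeeds,
    assuming a verifier that always detects a failed try."""
    return 1 - (1 - p) ** attempts


baseline = chain_success(0.95, 10)
gated = chain_success(step_success_with_retries(0.95, 2), 10)
print(f"no retries: {baseline:.3f}")
print(f"one retry per step: {gated:.3f}")
```

Under those assumptions, a single verified retry per step lifts the ten-step chain from roughly 0.60 to roughly 0.975. Real verifiers are imperfect, so actual gains will be smaller.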

| System Property | Single-Agent (SAS) | Multi-Agent Orchestration (MAS) |
|---|---|---|
| Architectural model | Monolithic, linear | Distributed, graph-based |
| Logic processing | Stochastic completion | State-machine governed |
| Context management | Saturated context window | Segmented pinned truths |
| Reliability profile | High variance | Deterministic with verification |
| Core human skill | Instruction crafting | System architecture and design |

The Friction: The Monster Prompt Failure Mode

When teams force non-linear enterprise logic into linear prompts, they create monster prompts: massive instruction blocks that attempt to cover every edge case while increasing latency and drift.

A widely discussed case is KPMG’s TaxBot journey, where a very large prompt framework accelerated draft generation but became a scalability bottleneck. Re-reading huge instruction payloads per generation cycle is expensive and unpredictable.

The lesson is architectural:

- rules should live in systems, not in ever-growing prose;

- state should be externalized and versioned;

- governance should be enforceable, not implied.

This is sovereign context in practice: business logic as a fixed constitution, not a volatile paragraph.

The Synthesis: Architecture over Instruction

Production-grade AI moves intelligence out of prompt text and into Directed Acyclic Graphs (DAGs).

In a linear prompt chain, one model attempts everything in sequence.

In an agentic DAG:

- each node is a bounded task,

- each task is executed by a specialist,

- execution order is explicit,

- parallel branches reduce end-to-end latency,

- acyclic topology rules out runaway recursive loops between nodes; any retry stays bounded inside a single node.
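The properties in this list can be sketched with Python's standard-library `graphlib` (Python 3.9+). The workflow graph and the `run_node` stub are hypothetical; a real node would call a model or a tool.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical workflow: node -> set of nodes it depends on.
GRAPH = {
    "extract": set(),
    "check_policy": {"extract"},
    "check_license": {"extract"},
    "summarize": {"check_policy", "check_license"},
}


def run_node(name: str, inputs: dict) -> str:
    """Stand-in for a specialist agent working on one bounded task."""
    return f"{name}({', '.join(sorted(inputs))})"


def run_dag(graph: dict) -> tuple[dict, list]:
    """Run nodes in dependency order; independent nodes run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    results, waves = {}, []
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = list(sorter.get_ready())  # nodes whose dependencies are done
            waves.append(sorted(ready))
            futures = {
                n: pool.submit(run_node, n, {d: results[d] for d in graph[n]})
                for n in ready
            }
            for n, fut in futures.items():
                results[n] = fut.result()
                sorter.done(n)
    return results, waves


results, waves = run_dag(GRAPH)
print(waves)
```

Here the two checks run in the same wave because neither depends on the other, and a cyclic graph is rejected before any node executes.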

Interoperability Layer: MCP and A2A

Two protocol families are becoming foundational for enterprise orchestration.

- MCP (Model Context Protocol): standard interface between model runtimes and external tools, data systems, and business memory.

- A2A (Agent2Agent): standard communication contract for independent agents to coordinate tasks across heterogeneous vendors.

Practical metaphor:

- MCP is the cable between model and enterprise systems.

- A2A is the office protocol between digital workers.

Without these standards, orchestration becomes brittle integration debt.
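To make the "cable" metaphor concrete: MCP messages are JSON-RPC 2.0, and a tool invocation is a request with the method `tools/call`. The sketch below only builds the general shape of such a request. The tool name `lookup_credential` and its arguments are hypothetical, the helper is not part of any SDK, and the current MCP specification should be treated as authoritative for field details.

```python
import itertools
import json

_request_ids = itertools.count(1)  # JSON-RPC requests carry a unique id


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments, for illustration only.
wire = tool_call_request("lookup_credential", {"staff_id": "S-17"})
print(wire)
```

The value of the standard is that the orchestrator needs only this one envelope, whichever system sits behind the tool.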

Case in Point: The CareOps Pattern

Consider a large healthcare network managing credentialing, compliance checks, and staff operations. It is the kind of workload where the return on architecture becomes visible.

Typical pre-orchestration profile:

- heavy manual routing,

- high review burden,

- fragmented audit trace,

- expensive context replay.

Post-orchestration pattern:

- Commander agent routes intent to parallel specialists.

- Retrieval layer fetches minimal relevant tokens instead of replaying long conversation history.

- Every decision point is logged in an auditable control graph.
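The routing and logging steps of this pattern might look like the following sketch. Everything named here is an assumption made for illustration: the intents, the specialist functions, and the shape of the audit entries are hypothetical and not taken from any real deployment.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timezone

# Hypothetical specialists; each would wrap a model or tool call in practice.
SPECIALISTS = {
    "credentialing": lambda req: f"credential check queued for {req['staff_id']}",
    "compliance": lambda req: f"compliance rules evaluated for {req['staff_id']}",
    "scheduling": lambda req: f"shift options listed for {req['staff_id']}",
}

# Hypothetical intent table: which specialists each intent fans out to.
ROUTES = {
    "onboard_clinician": ["credentialing", "compliance"],
    "swap_shift": ["scheduling"],
}


def commander(request: dict, audit_log: list) -> dict:
    """Route one request to its specialists in parallel and log each decision."""
    targets = ROUTES.get(request["intent"])
    if targets is None:
        audit_log.append({"event": "rejected", "intent": request["intent"]})
        raise ValueError(f"no route for intent {request['intent']!r}")
    audit_log.append({
        "event": "routed",
        "intent": request["intent"],
        "targets": targets,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(SPECIALISTS[name], request) for name in targets}
        results = {name: fut.result() for name, fut in futures.items()}
    for name in targets:
        audit_log.append({"event": "completed", "specialist": name})
    return results
```

Routing is a table lookup rather than a model decision, so the same intent always reaches the same specialists and every fan-out leaves a trace.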

Representative outcomes reported in similar transformations include steep labor-hour reduction, significant query-cost compression, and large compliance gains when governance logic is embedded at the architecture layer.

Critical Reflection: The Security Gap

Autonomy increases attack surface.

As agents gain tool access and memory persistence, organizations must secure the logic plane, not just model output.

Key risks:

- Data exfiltration through hidden instruction payloads in external content.

- Tool misuse where injected prompts trigger destructive API actions.

- Context poisoning via slow manipulation of long-lived memory state.

- RAG poisoning through tainted retrieval corpora.

Minimum controls for production:

- Human-in-the-loop for high-impact actions.

- Policy-gated tool execution.

- Immutable audit logs for all agent decisions.

- Trust boundaries for retrieval sources and memory writes.
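Three of these controls (policy gating, human approval for high-impact actions, and audit logging) can be combined in a few lines. This is a sketch under stated assumptions: the `POLICY` table, the tool names, and the `approver` callback are hypothetical. The hash chain makes the log tamper-evident rather than truly immutable, which would also require write-once storage.

```python
import hashlib
import json

# Hypothetical policy table: tool name -> risk tier.
POLICY = {"read_record": "low", "send_email": "medium", "delete_record": "high"}


class AuditLog:
    """Append-only log; each entry hashes the previous one so edits are detectable."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev: str, record: dict) -> str:
        body = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev + body).encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        self.entries.append(
            {"record": record, "prev": prev, "hash": self._digest(prev, record)}
        )

    def verify(self) -> bool:
        """Recompute the chain; any altered record breaks it."""
        prev = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True


def gated_call(tool: str, args: dict, log: AuditLog, approver=None) -> str:
    """Decide whether a tool call may run, and record the decision either way."""
    tier = POLICY.get(tool)
    if tier is None:
        decision = "denied: unknown tool"
    elif tier == "high" and not (approver and approver(tool, args)):
        decision = "denied: approval required"
    else:
        decision = "allowed"
    log.append({"tool": tool, "args": args, "decision": decision})
    return decision
```

Denials are logged as carefully as approvals, since a pattern of blocked calls is often the first visible sign of an injection attempt.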

The Horizon: Blueprint for the Agentic Enterprise

The era of the magic prompt is over.

The durable advantage now belongs to teams that can architect orchestrated systems, not merely author clever instructions.

Strategic direction for leadership:

- Shift organizational focus from doing to orchestrating.

- Reskill teams from implementation detail toward system design, verification, and governance.

- Standardize interoperability through open protocols.

- Treat architecture as the core product, with prompts as replaceable configuration.

The final frontier of machine intelligence is no longer model size.

It is architecture.

References

- Context Engineering: From Prompts to Corporate Multi-Agent Architecture (arXiv:2603.09619).

- Reasoning-aware and topology-aware multi-agent orchestration papers (arXiv).

- Enterprise reports on AI agent trends and orchestration architecture.

- KPMG TaxBot reporting and analysis on monster-prompt limitations.

- Linux Foundation and industry documentation on A2A interoperability.

- Databricks and ecosystem references on DAG design and MCP integration.

- Security surveys on prompt injection, tool misuse, and agentic threat models.

Sources

- https://github.com/orgs/community/discussions/188557

- https://arxiv.org/abs/2603.09619

- https://medium.com/@abhi_dabas/the-death-of-prompt-engineering-and-the-rise-of-ai-playbooks-85391fcc26ed

- https://www.reddit.com/r/PromptEngineering/comments/1srcdkh/prompt_engineering_is_breaking_at_scale_with_ai/

- https://cloud.google.com/resources/content/ai-agent-trends-2026

- https://dev.to/manikandan/beyond-the-prompt-why-2026-is-the-year-of-the-autonomous-ai-process-15j5

- https://www.seasiainfotech.com/blog/multiagent-systems

- https://medium.com/@harsh2013/building-enterprise-grade-multi-agent-ai-systems-a-complete-architecture-guide-c00e212b9c54

- https://www.gartner.com/en/articles/context-engineering

- https://arxiv.org/html/2510.00326v1

- https://agility-at-scale.com/ai/agents/multi-agent-systems/

- https://arxiv.org/html/2502.02533v2

- https://fast.io/resources/ai-agent-swarm-orchestration/

- https://www.theregister.com/2025/08/20/kpmg_giant_prompt_tax_agent/

- https://www.ibm.com/think/podcasts/mixture-of-experts/monster-prompt-openai-business-play-cloud-gemini-nano-banana-us-open

- https://www.brainvire.com/blog/b2b-ai-agents-marketing-sales-transformation/

- https://www.goingconcern.com/monday-morning-accounting-news-brief-california-cpa-pathways-bill-just-one-step-from-law-consultants-bsing-on-ai-9-8-25/

- https://arxiv.org/html/2603.00774v2

- https://arxiv.org/pdf/2603.09619

- https://www.firecrawl.dev/blog/agentic-ai-trends

- https://www.databricks.com/blog/what-is-dag

- https://santanub.medium.com/directed-acyclic-graphs-the-backbone-of-modern-multi-agent-ai-d9a0fe842780

- https://www.reddit.com/r/LocalLLaMA/comments/1o89qna/i_built_an_opensource_framework_for_llm_agents/

- https://www.informatica.com/blogs/disruptive-innovation-or-industry-buzz-understanding-model-context-protocols-role-in-data-driven-agentic-ai.html

- https://www.strata.io/blog/agentic-identity/practicing-the-human-in-the-loop/

- https://www.tigera.io/blog/how-ai-agents-communicate-understanding-the-a2a-protocol-for-kubernetes/

- https://generect.com/blog/what-is-mcp/

- https://www.databricks.com/blog/what-is-model-context-protocol

- https://www.linuxfoundation.org/press/a2a-protocol-surpasses-150-organizations-lands-in-major-cloud-platforms-and-sees-enterprise-production-use-in-first-year

- https://www.salesforce.com/ap/agentforce/ai-agents/agent2agent-protocol/

- https://www.prnewswire.com/news-releases/a2a-protocol-surpasses-150-organizations-lands-in-major-cloud-platforms-and-sees-enterprise-production-use-in-first-year-302737641.html

- https://www.hpcwire.com/aiwire/2026/04/09/linux-foundation-a2a-protocol-marks-one-year-with-broad-enterprise-and-cloud-adoption/

- https://github.com/anmolksachan/AI-ML-Free-Resources-for-Security-and-Prompt-Injection/blob/main/README.md

- https://ieeexplore.ieee.org/iel8/6287639/6514899/11447227.pdf

- https://www.synvestable.com/human-in-the-loop.html

- https://digitalcommons.odu.edu/cgi/viewcontent.cgi?article=1148&context=covacci-undergraduateresearch

- https://resources.anthropic.com/hubfs/2026%20Agentic%20Coding%20Trends%20Report.pdf

Published at: Apr 25, 2026 · Modified at: May 5, 2026
