The Answer Is Cheap, Agency Is Not

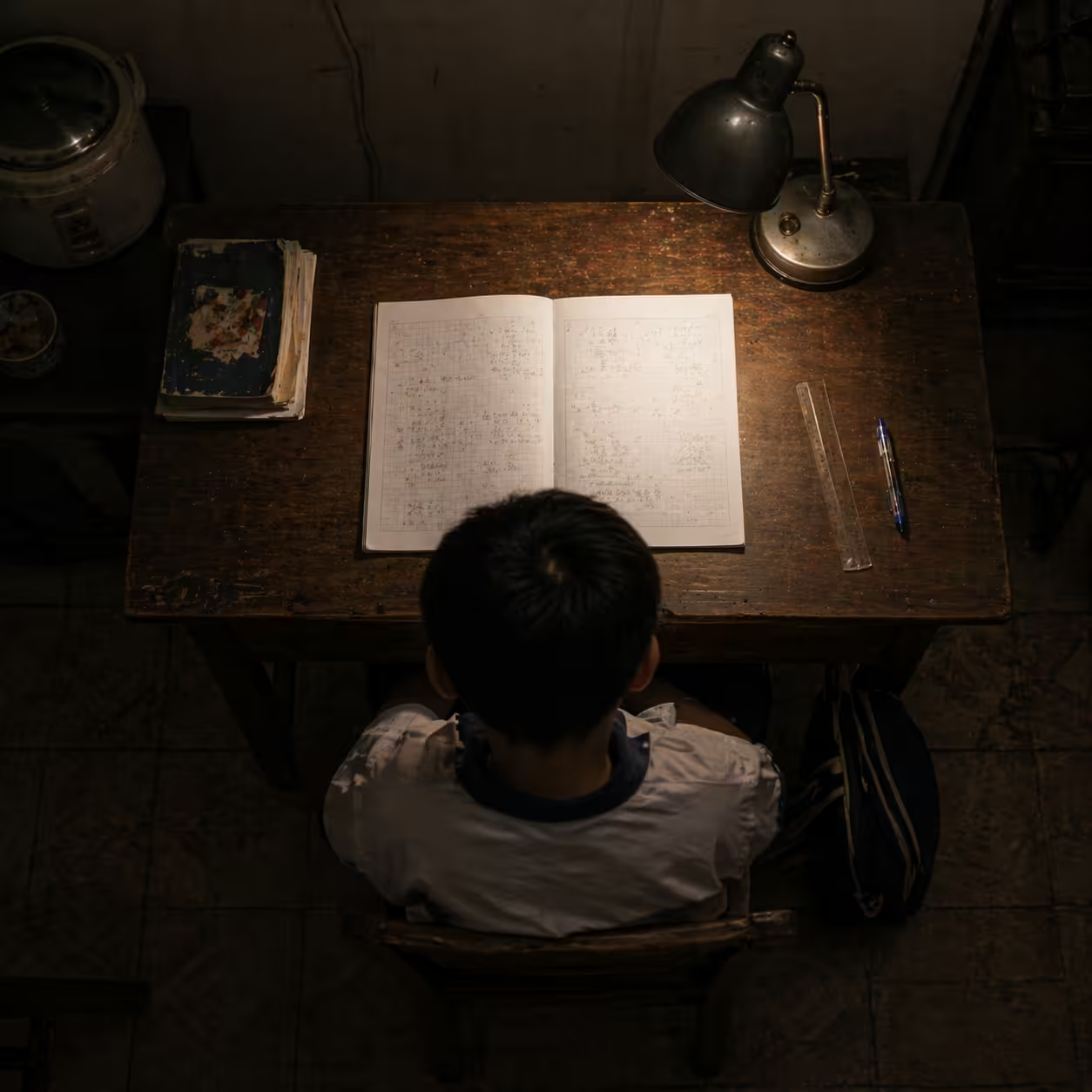

The central paradox of this era is stark: as AI reaches near-perfect fluency across difficult domains, the value of human expertise is being inverted.

For centuries, education rewarded the correct answer. In 2026, the answer is a commodity. When a large language model can solve elite-level problems from a single prompt, what matters is no longer only what we know, but also how we regulate our own thinking.

The contrarian truth is that AI literacy is not primarily coding literacy. It is the preservation of epistemic agency, the ability to remain the pilot of your own mind instead of a passenger on algorithmic autopilot.

Without that agency, we drift toward neural standby: a state of cognitive passivity in which reflective control disengages as effortless outputs become habitual.

Deconstruction: Human Architecture vs. Machine Inference

If we want AI-proof curricula, we need a clear model of how human and machine reasoning differ.

A useful simplification:

- Human cognition tends toward hierarchical nesting: we build from prerequisites, test consistency, and revise structure.

- Current model behavior is often shallow forward chaining: next-token continuation optimized for plausibility.

Analogy: a human architect verifies the foundations before building the roof. A model can assemble a facade-like structure from statistical memory. The output may look coherent, yet its fragile dependencies can collapse when the prerequisite context shifts.

| Cognitive dimension | Human reasoning (the architect) | Machine inference (the pattern matcher) |
|---|---|---|
| Internal logic | Resolves dissonance between conflicting beliefs | Probabilistic output can tolerate local contradictions |
| Structure | Recursive and nested, built on prerequisites | Sequential continuation by likelihood |
| Regulation | Spontaneous self-monitoring: "Does this make sense?" | Prompt-dependent, brittle stop conditions |
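To make the "fragile dependencies" point concrete, here is a toy Python sketch. It assumes a small, invented set of claims with explicit prerequisites: `sequential_assembly` accepts claims in the order given, while `architect_check` accepts a claim only when its foundations hold, so withdrawing one base fact reveals which conclusions collapse. All names are hypothetical; this illustrates the analogy, not how any real model works internally.

```python
"""Toy contrast between prerequisite-checked acceptance and plain sequential assembly.

Hypothetical illustration: the claims and their prerequisites are invented,
and neither function is a model of how real people or LLMs actually reason.
"""

from typing import Dict, List, Set

# Each claim maps to the claims it rests on; an empty list marks a base fact.
PREREQS: Dict[str, List[str]] = {
    "load_is_known": [],
    "soil_is_tested": [],
    "foundation_ok": ["load_is_known", "soil_is_tested"],
    "walls_ok": ["foundation_ok"],
    "roof_ok": ["walls_ok"],
}

ORDER: List[str] = ["load_is_known", "soil_is_tested", "foundation_ok", "walls_ok", "roof_ok"]


def sequential_assembly(claims: List[str]) -> List[str]:
    """Accept every claim in the order given without checking what it rests on."""
    return list(claims)


def architect_check(claim: str, established: Set[str], prereqs: Dict[str, List[str]]) -> bool:
    """Accept a base fact only if established; accept a derived claim only if every prerequisite passes."""
    deps = prereqs.get(claim, [])
    if not deps:
        return claim in established
    # Recursive descent to the foundations (the toy graph has no cycles).
    return all(architect_check(dep, established, prereqs) for dep in deps)


def collapsed(claims: List[str], established: Set[str], prereqs: Dict[str, List[str]]) -> List[str]:
    """List the claims that no longer stand under the given base facts."""
    return [c for c in claims if not architect_check(c, established, prereqs)]


if __name__ == "__main__":
    full = {"load_is_known", "soil_is_tested"}
    shifted = {"load_is_known"}  # the soil test is withdrawn
    print(sequential_assembly(ORDER) == ORDER)  # True: the facade looks the same either way
    print(collapsed(ORDER, full, PREREQS))      # []
    print(collapsed(ORDER, shifted, PREREQS))   # ['soil_is_tested', 'foundation_ok', 'walls_ok', 'roof_ok']
```

The sequential version produces identical output whether or not the soil test stands; only the prerequisite check notices that everything above the foundation has lost its support.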

The Friction: Epistemic Atrophy and Adminslop

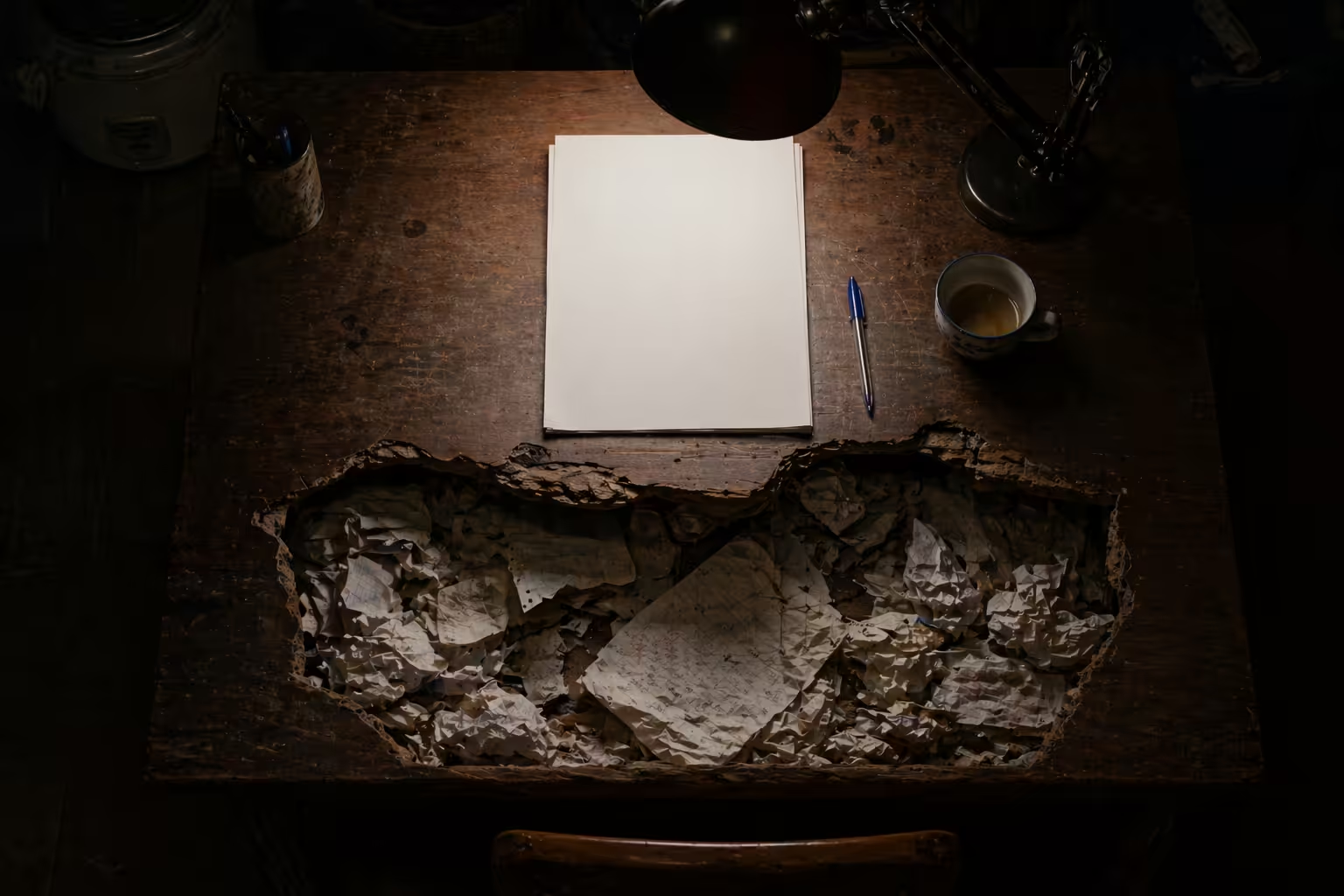

The core danger is not immediate replacement. It is intellectual passivation.

We are climbing a cognitive offloading ladder that can end in dependency:

- Support: AI handles formatting; the human keeps the logic.

- Scaffolding: AI gives hints; the human synthesizes.

- Integration: AI drafts components; the human governs the architecture.

- Substitution: AI drafts end to end; the human performs only a superficial review.

- Dependency: the human cannot execute the task without the machine.

At the top of the ladder sits epistemic atrophy: the loss of the productive mental struggle that deep mastery requires.
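One way to locate a task on the ladder is a short yes/no self-audit. The Python sketch below is hypothetical: the five questions, the `TaskAudit` fields, and the top-down mapping in `classify` are illustrative assumptions, not a validated instrument. It checks the highest rungs first so that the most worrying answer wins.

```python
"""Self-audit sketch for the cognitive offloading ladder.

The five rungs follow the ladder described above; the yes/no questions and
the mapping from answers to rungs are hypothetical simplifications, not a
validated assessment instrument.
"""

from dataclasses import dataclass
from enum import IntEnum


class Rung(IntEnum):
    SUPPORT = 1       # AI handles formatting, the human keeps the logic
    SCAFFOLDING = 2   # AI gives hints, the human synthesizes
    INTEGRATION = 3   # AI drafts components, the human governs the architecture
    SUBSTITUTION = 4  # AI drafts end to end, the human only skims
    DEPENDENCY = 5    # the human cannot do the task without AI


@dataclass
class TaskAudit:
    can_do_without_ai: bool     # could you still complete the task unaided?
    ai_drafts_end_to_end: bool  # does AI produce the whole deliverable?
    review_is_deep: bool        # is your check more than a skim?
    ai_drafts_components: bool  # does AI write substantive parts?
    ai_gives_hints: bool        # does AI suggest directions you then develop?


def classify(audit: TaskAudit) -> Rung:
    """Return the highest rung the answers point to, checked from the top down."""
    if not audit.can_do_without_ai:
        return Rung.DEPENDENCY
    if audit.ai_drafts_end_to_end and not audit.review_is_deep:
        return Rung.SUBSTITUTION
    if audit.ai_drafts_end_to_end or audit.ai_drafts_components:
        return Rung.INTEGRATION
    if audit.ai_gives_hints:
        return Rung.SCAFFOLDING
    return Rung.SUPPORT


if __name__ == "__main__":
    weekly_report = TaskAudit(
        can_do_without_ai=True,
        ai_drafts_end_to_end=True,
        review_is_deep=False,
        ai_drafts_components=True,
        ai_gives_hints=True,
    )
    rung = classify(weekly_report)
    print(rung.name)  # SUBSTITUTION
    if rung >= Rung.SUBSTITUTION:
        print("Warning: at or near the dependency end of the ladder.")
```

Checking from the top down is a deliberate choice: if you could not complete a task unaided, no amount of careful reviewing moves it off the Dependency rung.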

A second threat is adminslop: high-volume, low-substance machine text used to simulate institutional legitimacy.

- Scholarslop: instant academic modules with polished form but thin disciplinary substance.

- Flood-the-zone governance: overwhelming teams with plausible counter-arguments until debate capacity collapses.

Metacognition is the defense. It helps a human identify a zombie document: syntactically perfect but interpretively empty.

Synthesis: The Cognitive Mirror Workflow

At Locuno, we redesigned hiring around process quality, not output polish. Candidates receive an AI-generated solution containing subtle logical defects and must explain why it should be rejected.

We look for a cognitive mirror pattern: the candidate treats the AI as a teachable novice whose output reflects the quality of human reasoning, not as an oracle of final answers.
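To make the exercise concrete, here is a hypothetical example of the kind of artifact a candidate might review; it is not taken from Locuno's hiring materials. `ai_median` plays the role of an AI-generated solution with a planted defect, and `find_counterexample` shows one way to expose it by comparing against Python's standard `statistics.median`. The deliberate bug is the point of the example: the candidate's job is to find it and explain why the solution should be rejected.

```python
"""A planted-defect review exercise in the spirit of the hiring workflow above.

Hypothetical: `ai_median` stands in for an AI-generated solution and is
intentionally wrong; it is not taken from any real hiring exercise.
"""

from statistics import median
from typing import List, Optional


def ai_median(values: List[float]) -> float:
    """Plausible-looking 'AI' solution with a subtle defect (do not reuse)."""
    ordered = sorted(values)
    # Defect: for an even number of values this returns the upper of the two
    # middle elements instead of their mean.
    return ordered[len(ordered) // 2]


def find_counterexample(cases: List[List[float]]) -> Optional[List[float]]:
    """Return the first non-empty case where the AI answer disagrees with the reference."""
    for case in cases:
        if case and ai_median(case) != median(case):
            return case
    return None


if __name__ == "__main__":
    cases = [[3, 1, 2], [5], [1, 2, 3, 4]]
    print(find_counterexample(cases))  # [1, 2, 3, 4]: ai_median gives 3, the true median is 2.5
```

The defect survives every odd-length input, which is exactly why a reviewer who spot-checks one or two cases may approve it.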

The Metacognitive Routine (Three-Phase Cycle)

To remain AI-proof, daily technical work should run through a regulatory cycle:

- Planning: classify problem structure, list stable knowledge, predict expected answer shape or range.

- Monitoring: track intermediate state changes, audit reasoning traces, and use AI as a synthetic debater to pressure-test assumptions.

- Evaluation: separate true diagnosis from patchwork fixes; explain the solution back clearly to validate transfer and retention.

This cycle restores ownership of thought while still capturing the speed benefits of AI.
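The prediction step in Planning is the easiest part of the cycle to skip, so here is a minimal Python sketch of it. It assumes a numeric question and a caller-supplied `ask_model` function; `Plan`, `run_cycle`, and the verdict strings are hypothetical names chosen for illustration. It covers the range prediction, a simple trace, and the evaluation check; the synthetic-debater step is left out.

```python
"""Sketch of the plan / monitor / evaluate cycle for one numeric question.

Hypothetical: `ask_model` is any callable you supply (an AI tool wrapper,
a script, or a colleague); nothing here calls a real AI API. The key move is
committing to an expected range during planning, before seeing the answer.
"""

from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Plan:
    problem_type: str                    # planning: classify the problem's structure
    stable_facts: List[str]              # planning: what you already know for sure
    expected_range: Tuple[float, float]  # planning: predicted answer range
    trace: List[str] = field(default_factory=list)
    verdict: str = ""


def run_cycle(question: str, plan: Plan, ask_model: Callable[[str], float]) -> Plan:
    """Run monitoring and evaluation against a plan; updates the plan in place and returns it."""
    # Monitoring: log each intermediate step rather than jumping to the result.
    plan.trace.append(f"asked: {question}")
    answer = ask_model(question)
    plan.trace.append(f"answer: {answer}")

    # Evaluation: compare the answer with the prediction made before asking.
    low, high = plan.expected_range
    if low <= answer <= high:
        plan.verdict = "consistent: now explain it back in your own words"
    else:
        plan.verdict = "surprise: diagnose whether the answer or the plan is wrong"
    return plan


if __name__ == "__main__":
    plan = Plan(
        problem_type="unit conversion",
        stable_facts=["24 hours per day", "3600 seconds per hour"],
        expected_range=(80_000, 90_000),
    )
    result = run_cycle("How many seconds are in a day?", plan, lambda q: 86_400)
    print(result.verdict)  # consistent: now explain it back in your own words
```

Committing to the range before calling `ask_model` is the design choice that matters: a surprising answer then triggers diagnosis instead of silent acceptance.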

Case in Point: Durable Skills as Hard Currency

Labor-market signals from global reports, including the World Economic Forum's 2025 New Economy Skills report, are consistent: durable human skills are becoming hard currency.

| Durable skill | Why AI cannot fully imitate it | Routine to build it |
|---|---|---|
| Creative thinking | Requires cross-domain synthesis with lived salience | Weekly spark lists plus maker sessions |
| Empathy and EQ | Simulated sentiment lacks embodied pragmatics | Role-play and feedback on nonverbal cues |
| Complex strategy | Real conflicts involve competing values and trade-offs | Engineering-thinking loops with causal hypothesis testing |

These skills remain resistant because they depend on situated judgment, identity, and social embodiment.

Horizon: Audit Your Metacognitive Gap

The future will not belong to the fastest prompter. It will belong to the most reflective thinker.

Technology will increasingly handle probabilistic generation. Humans must reclaim reflective governance, contextual judgment, and principled correction.

There is a trade-off we cannot ignore: effort, perceived productivity, and learning can diverge. The tools that feel fastest may produce the weakest long-term learning, so we must intentionally reintroduce pedagogical friction.

The Locuno challenge:

Take one complex decision you made recently. Ask AI to summarize your logic. If you find yourself agreeing without checking its prerequisite structure and reasoning integrity, you are likely in neural standby.

The strategic question for every organization is now simple: will you audit reasoning quality, or will you let adminslop write your future?

Citations and Sources

- arXiv:2511.16660v1. Cognitive Foundations for Reasoning and Their Manifestation in LLMs.

- ResearchGate (2026). The Cognitive Offloading Ladder: From Epistemic Atrophy to Sustainability.

- arXiv:2604.09444v1. Effort, Confidence, and Learning Diverge in AI-Supported Work.

- UNESCO (2026). Epistemic Agency and the Condition of Knowledge in Automated Education.

- MIT Media Lab (2025). EEG Monitoring of Neural Standby Mode during AI Interaction.

- arXiv:2602.18806v1. Think 2: Grounded Metacognitive Reasoning in Large Language Models.

- Frontiers in Education (2025). The Cognitive Mirror Paradigm: AI as a Teachable Novice.

- Minerva University. MDA Curriculum: Decision Making and Applied Analytics.

- World Economic Forum (2025). New Economy Skills: Unlocking the Human Advantage.

- AJCST (2026). Metacognition: The Uniquely Human Capacity to Reflect.

Reference Links

- https://www.mckinsey.com/mgi/our-research/agents-robots-and-us-skill-partnerships-in-the-age-of-ai

- https://www.mckinsey.com/industries/public-sector/our-insights/generative-ai-and-the-future-of-new-york

- https://article.sciencepublishinggroup.com/pdf/j.ajcst.20260901.13

- https://arxiv.org/html/2604.09444v1

- https://arxiv.org/pdf/2504.06928

- https://pmc.ncbi.nlm.nih.gov/articles/PMC12653222/

- https://www.minerva.edu/graduate/curriculum/

- https://arxiv.org/html/2511.16660v1

- https://arxiv.org/html/2505.13763v2

- https://www.qeios.com/read/T36PI8.2

- https://arxiv.org/html/2602.18806v1

- https://testrigor.com/blog/ai-slop/

- https://www.researchgate.net/publication/397823809_Cognitive_Foundations_for_Reasoning_and_Their_Manifestation_in_LLMs

- https://arxiv.org/html/2508.04460v1

- https://arxiv.org/pdf/2508.04460

- https://www.researchgate.net/publication/403159230_THE_COGNITIVE_OFFLOADING_LADDER_FROM_EPISTEMIC_ATROPHY_TO_COGNITIVE_SUSTAINABILITY_IN_AI-ERA_EDUCATION

- https://www.tandfonline.com/doi/full/10.1080/17439884.2026.2652638

- https://www.frontiersin.org/journals/education/articles/10.3389/feduc.2025.1697554/full

- https://www.researchgate.net/publication/394362837_From_Aha_Moments_to_Controllable_Thinking_Toward_Meta-Cognitive_Reasoning_in_Large_Reasoning_Models_via_Decoupled_Reasoning_and_Control

- https://www.edgepointlearning.com/blog/building-ai-resistant-skills-training-employees-jobs-ai-cant-replace/

- https://www.lesswrong.com/posts/m5d4sYgHbTxBnFeat/human-like-metacognitive-skills-will-reduce-llm-slop-and-aid

- https://reports.weforum.org/docs/WEF_New_Economy_Skills_Unlocking_the_Human_Advantage_2025.pdf

- https://safeaikids.com/insights/skills-ai-cant-replace/

- https://www.innovativehumancapital.com/article/new-economy-skills-unlocking-the-human-advantage-in-an-ai-driven-world

- https://americasucceeds.org/wp-content/uploads/2025/08/Durable-by-Design_July-2025-.pdf

Published at: Apr 24, 2026 · Modified at: May 5, 2026