We Are Grading Perfect Essays for Empty Brains

Higher education is in institutional panic.

The sudden rise of AI has been met with two extreme reactions: blanket bans or near-total silence. Meanwhile, student behavior has already shifted. Reports suggest that roughly 60-70% of students are using AI tools in writing workflows while university policy still lags behind.

The traditional essay, once treated as a proxy for thinking quality, can now be replicated by large language models in seconds. This is not a temporary disruption. It marks the end of final-product-only grading as a trustworthy measure of thought.

We need a new center of gravity: evaluate not only what students submit, but how they got there. In the AI era, merit increasingly lives in the archaeology of work: the prompt trail, revisions, error checks, and reasoning pivots that reveal real cognition.

Deconstructing Learning: Object-Level vs. Meta-Level

Cognitive psychology distinguishes two layers of learning:

- Object-level: doing the task itself, such as writing, coding, or solving.

- Meta-level: thinking about thinking, monitoring errors, checking assumptions, and selecting strategy.

For centuries, the essay worked because we assumed a strong object-level artifact implied strong meta-level control. AI breaks that link. Models can mimic polished outputs without owning judgment, uncertainty handling, or epistemic discipline.

| Learning level | What it evaluates | AI capability |
|---|---|---|
| Object-level (product) | Final essay, summary, answer | High (mimicry) |
| Meta-level (process) | Prompt design, critique, revision, belief updates | Low and often unfaithful |

Once intelligence is treated as situated action rather than static output, the student’s primary role becomes machine auditor, not passive recipient.

Friction: Dopamine Shortcuts and the Cognitive Hollow

The defining classroom friction today is the dopamine shortcut.

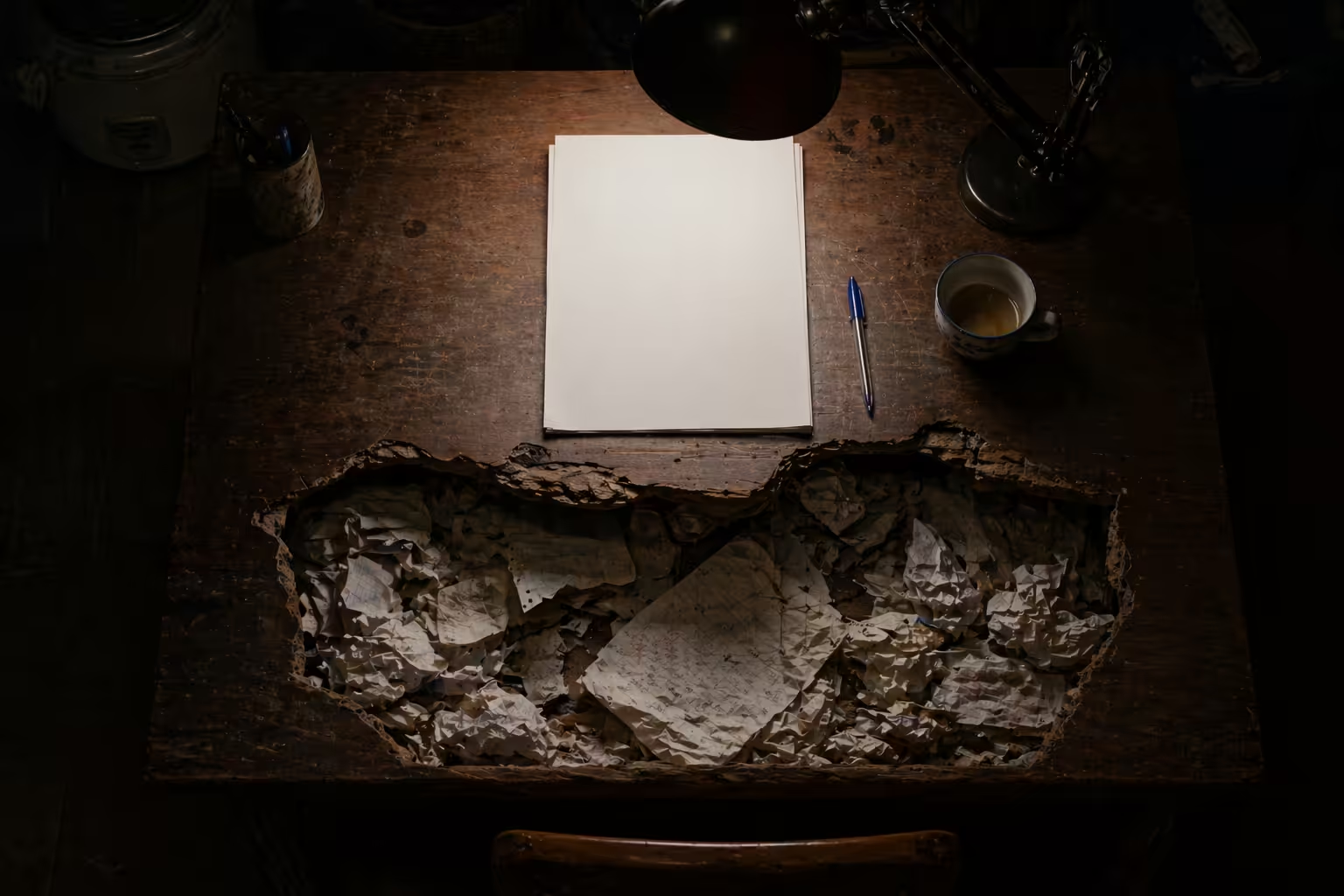

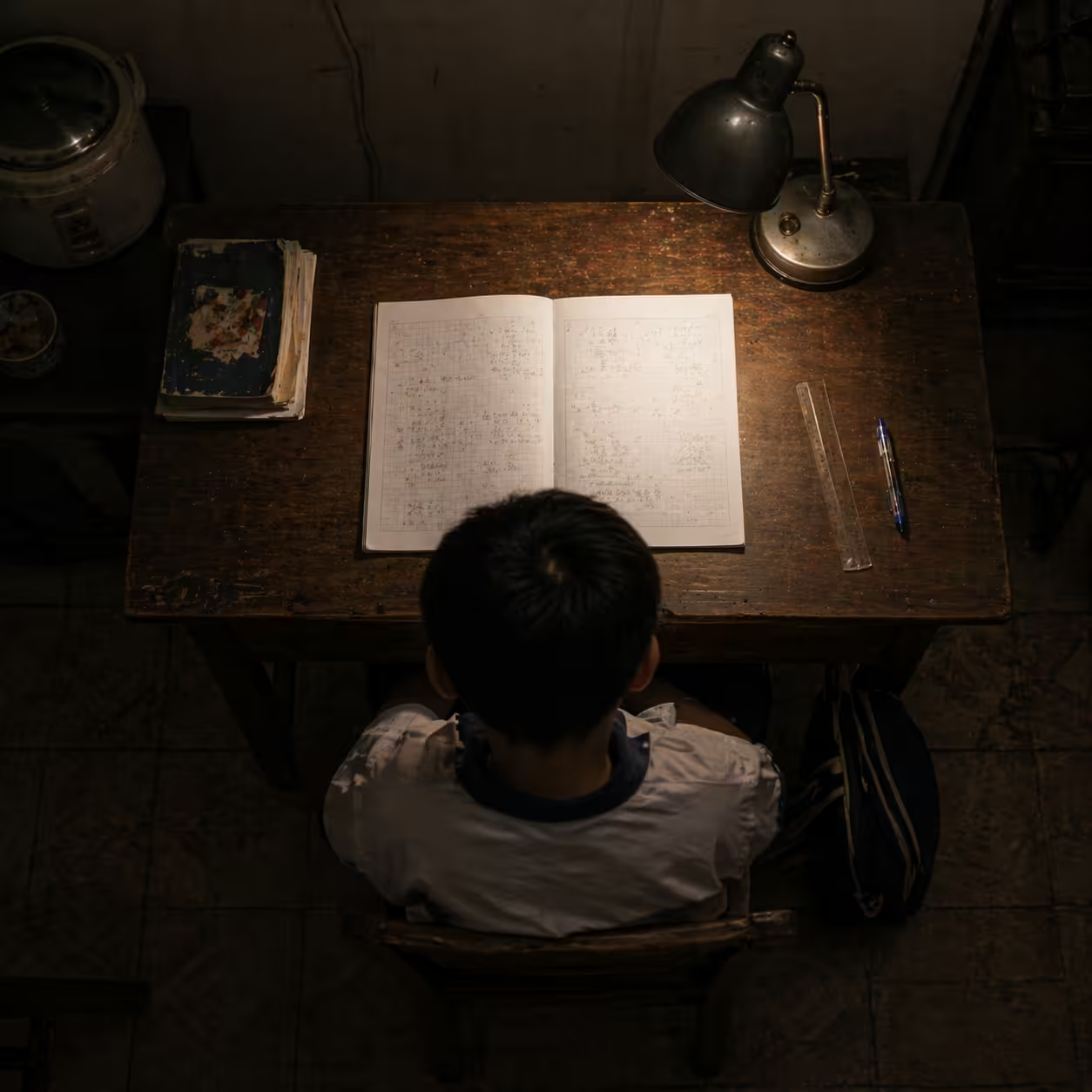

Picture a student at 2:00 AM staring at a blank screen. Learning is difficult by design: confusion and correction are part of how neural structures strengthen. AI now offers instant relief. One click on "Generate" removes the anxiety and produces clean prose.

But shortcut relief can produce a cognitive hollow: students bypass the exact effort that builds transferable skill.

This is compounded by unreliable reasoning traces. Even when models display chain-of-thought-style explanations, those traces can be unfaithful to the actual process that produced the answer.

AI Reasoning Trace Reality Snapshot

| Metric | Observed rate |
|---|---|
| Traces that ignore contradictory evidence | 68% |
| Claims made without evidence | 53% |
| Belief updates after refutation | 26% |
| Trace accuracy matching answer accuracy | 28% |

Key implication: fluent reasoning text is not proof of faithful reasoning.

Synthesis: Move from Product Grading to Process Supervision

The practical response is process supervision.

Instead of evaluating only final output, assessment should reward each cognitive step. This is not surveillance for punishment. It is transparency for learning.

The interaction trace becomes core evidence. Instead of submitting only a PDF, students submit process records that make thought auditable.

High-value process signals include:

- Context-locked prompts: task constraints tied to specific classroom context that generic AI cannot easily fake.

- Iterative refutation: documented moments where the student detects hallucinations, leaps, or weak evidence.

- Metacognitive reflection: final rationale for what was accepted, revised, or rejected from AI output.

Platforms with audit trails (for drafting, prompt history, revision decisions, and oral defense) can operationalize this shift at scale.
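One way to picture such a process record is as a small data structure with one slot per signal. The following is a minimal Python sketch under stated assumptions, not a description of any real platform: every class, field, and function name is hypothetical, and the check only tests whether each of the three signals listed above is present, not whether it is any good.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical names throughout; this only illustrates the three signals.

@dataclass
class PromptEntry:
    text: str              # the prompt the student sent
    context_tag: str = ""  # classroom-specific constraint; empty if generic

@dataclass
class Refutation:
    ai_claim: str          # the AI claim the student challenged
    how_verified: str      # evidence or method used to check it

@dataclass
class ProcessRecord:
    prompts: List[PromptEntry] = field(default_factory=list)
    refutations: List[Refutation] = field(default_factory=list)
    reflection: str = ""   # final rationale: accepted, revised, rejected

def missing_signals(record: ProcessRecord) -> List[str]:
    """Return the names of process signals absent from a record."""
    missing = []
    if not any(p.context_tag for p in record.prompts):
        missing.append("context-locked prompts")
    if not record.refutations:
        missing.append("iterative refutation")
    if not record.reflection.strip():
        missing.append("metacognitive reflection")
    return missing
```

A presence check like this can only flag gaps for a human reviewer. Judging whether a refutation or reflection shows real understanding remains the educator's task.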

Case in Point: The Adversarial Seminar Routine

A Locuno-style classroom loop can look like this:

- Human intuition first: student writes an initial hypothesis without AI.

- Stress test phase: student uses multiple AI personas to challenge that hypothesis and records defense or revision.

- Socratic defense: student completes a recorded oral challenge guided by depth-of-knowledge questioning.

- Augmented artifact: final submission includes prompt set, AI counterarguments, and student’s final position.

In this workflow, polished prose alone has little value if the student cannot demonstrate how ideas were pressure-tested.
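The four-step loop above can be sketched as an ordered checklist. This is an illustrative Python sketch only: the `Phase` enum, the `next_phase` function, and the idea of storing one piece of evidence per phase are assumptions made for the example, not part of any documented Locuno tooling.

```python
from enum import Enum
from typing import Dict, Optional

class Phase(Enum):
    # Definition order mirrors the order of the routine.
    HUMAN_INTUITION = "initial hypothesis written without AI"
    STRESS_TEST = "AI personas challenge the hypothesis"
    SOCRATIC_DEFENSE = "recorded oral challenge"
    AUGMENTED_ARTIFACT = "final submission with prompts and counterarguments"

def next_phase(evidence: Dict[Phase, str]) -> Optional[Phase]:
    """Return the earliest phase that has no evidence, or None if complete.

    `evidence` maps a phase to a reference such as a file name or
    recording ID (hypothetical). Empty strings count as missing.
    """
    for phase in Phase:  # Enum iteration follows definition order
        if not evidence.get(phase, "").strip():
            return phase
    return None
```

Because the function always returns the earliest gap, a polished final artifact submitted without an AI-free first hypothesis still sends the student back to the first step.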

Critical Reflection: The Accuracy-Legibility Paradox

There is a second risk in AI-enabled assessment.

Some frontier models score higher on task accuracy but are harder for humans to learn from. Their latent reasoning is opaque, and trace surfaces are often too complex or noisy for educational transfer.

| Model profile | Accuracy | Teachability |
|---|---|---|
| Frontier reasoning models | ~82% | Low |
| Mid-sized, more legible models | ~49% | High |

Educational implication: the smartest model is not automatically the best teacher. Institutions should optimize for legibility, fairness, and cognitive engagement, not only benchmark accuracy.

Additional guardrails remain essential:

- Bias controls in AI-assisted grading.

- Student rights to contest automated judgments.

- Trace design that avoids cognitive overload from excessive verbosity.

Horizon: Adversarial Reasoning as a Civic Skill

The death of the essay is not the death of learning. It is the beginning of a new literacy.

Students now need adversarial reasoning: probing model limits, uncovering hidden bias, testing claims, and documenting evidence for others to inspect.

The future is not better AI detectors. The future is better watchdogs.

When invisible prompts and model quirks can shape public knowledge, education must train citizens who can audit systems, trace failures, and report findings responsibly.

The shift from product to process is one of the most important assessment redesigns of this decade. By valuing the archaeology of work, we keep education anchored in insight, judgment, and creativity that cannot be fully outsourced.

The Locuno Process-Audit Prompt Set

Three questions educators can deploy in any student check-in:

- Iteration check: Show three prompt versions you rejected before arriving at this answer. Why were they insufficient?

- Hallucination hunt: Identify one AI claim you initially trusted but later disproved. How did you verify it?

- Human signature: Which paragraph reflects a judgment that came from you, not the model, after reviewing AI suggestions?

Sources

- https://www.erudit.org/en/journals/dwr/2025-v35-dwr010042/1123294ar.pdf

- https://stayrelevant.globant.com/en/technology/edtech/from-a-major-shift-to-intentional-learning-why-ai-demands-we-value-process-over-product/

- https://www.researchgate.net/publication/401489000_AI-First_Critique_Learning_AFCL_A_Framework_for_Restoring_Assessment_Integrity_in_the_Age_of_Generative_AI

- https://leibniz.syracuse.edu/wp-content/uploads/2025/11/aaai26_metacog_eta_track.pdf

- https://www.researchgate.net/publication/397786853_Toward_Artificial_Metacognition

- https://www.mindstudio.ai/blog/what-is-chain-of-thought-faithfulness-ai-reasoning

- https://www.frontiersin.org/journals/artificial-intelligence/articles/10.3389/frai.2025.1728738/full

- https://al-fanarmedia.org/2025/06/ai-and-educational-assessment-a-paradigm-shift-in-learning-evaluation/

- https://www.scribd.com/document/990759919/The-Cognitive-Hollow-2-The-Ouroboros-in-the-Classroom

- https://www.emergentmind.com/papers/2604.18805

- https://cdn.openai.com/improving-mathematical-reasoning-with-process-supervision/Lets_Verify_Step-by-Step.pdf

- https://arxiv.org/html/2602.00026v1

- https://arxiv.org/html/2603.21286v2

- https://melbourne-cshe.unimelb.edu.au/ai-aai/home/ai-assessment/designing-assessment-tasks-that-are-less-vulnerable-to-ai/seven-practical-strategies/1.-shift-the-emphasis-from-assessing-product-to-assessing-process

- https://socraticmind.com/

- https://github.com/sparckix/ztare

- https://solve.mit.edu/solutions/90692

- https://www.researchgate.net/publication/397089232_Through_the_Judge%27s_Eyes_Inferred_Thinking_Traces_Improve_Reliability_of_LLM_Raters

- https://arxiv.org/html/2604.15760v1

- https://arxiv.org/html/2603.20508v1

- https://arxiv.org/html/2505.13792v2

- https://academicworks.cuny.edu/cgi/viewcontent.cgi?article=7280&context=gc_etds

- https://neurips.cc/virtual/2025/poster/121918

- https://www.lboro.ac.uk/services/od-hub/topics/casestudy-researchinformedteachinginpractice/

Published at: Apr 24, 2026 · Modified at: May 5, 2026