Post-LLM Architecture: The Rise of Neural-Symbolic AI

The generative-AI revolution has reached a paradoxical impasse. After remarkable breakthroughs, large language models are now straining credibility in uncontrolled production settings. Reported error rates of 20 to 50 percent in high-noise environments are no longer treated as edge cases. In regulated domains such as healthcare, law, and finance, a single hallucinated answer can trigger fines, liability, or direct harm.

User trust reflects this friction. A growing share of leaders and end users now question whether fluent output is reliable output. The core reason is architectural: today’s LLMs optimize next-token likelihood, not grounded truth or formal consistency. They are exceptional statistical predictors, but they do not natively maintain an explicit model of facts, constraints, or proof obligations.

Scaling alone is unlikely to close that gap. The emerging direction is post-LLM architecture: combine deep neural learning with symbolic reasoning so systems can explain decisions, validate constraints, and abstain when evidence is insufficient.

Deconstruction: Learning vs. Logic

At first principles, neural and symbolic AI optimize different capabilities.

Neural systems learn from large unstructured corpora. They excel at perception-like tasks, language fluency, pattern abstraction, and flexible generalization. But they do not inherently follow rules deterministically.

Symbolic systems represent concepts as explicit structures such as rules, graphs, predicates, and constraints. They excel at deterministic inference, traceability, and consistency checks. But they are brittle with noisy inputs and expensive to maintain when domains evolve rapidly.

Neuro-symbolic AI reframes this as complementarity:

- Neural side: learns from raw data and handles ambiguity.

- Symbolic side: enforces logic, consistency, and auditable reasoning.

The resulting architecture resembles fast and slow cognition: intuitive generation followed by deliberative verification.

Friction: Hallucination and Overconfidence

Hallucination is not just a UX issue. It is a structural consequence of objectives that reward likely continuation over verified truth.

A pure LLM can produce fluent statements without any mechanism that asks: is this derivable from trusted knowledge, valid under constraints, or internally consistent across steps?

This creates a trust asymmetry:

- The model may be uncertain internally.

- The response style appears confident externally.

In high-stakes workflows, this is unacceptable. Systems must support calibrated uncertainty and refusal when evidence is missing. A symbolic verification layer can provide that missing behavior by refusing unsupported conclusions rather than confabulating plausible text.
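
As a minimal sketch of that refusal behavior, the Python below checks a claim against a small store of trusted facts and returns one of three verdicts. The names `Claim`, `Verdict`, `TRUSTED_FACTS`, `verify`, and `respond`, along with the sample facts, are illustrative assumptions and not part of any particular system.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    SUPPORTED = "supported"
    CONTRADICTED = "contradicted"
    ABSTAIN = "abstain"


@dataclass(frozen=True)
class Claim:
    subject: str
    relation: str
    value: str


# Hypothetical trusted knowledge: (subject, relation) -> value.
TRUSTED_FACTS = {
    ("contract_17", "governing_law"): "Delaware",
    ("contract_17", "term_months"): "24",
}


def verify(claim: Claim, trusted: dict) -> Verdict:
    """Compare a generated claim with trusted knowledge."""
    known = trusted.get((claim.subject, claim.relation))
    if known is None:
        # No evidence either way: abstain instead of guessing.
        return Verdict.ABSTAIN
    return Verdict.SUPPORTED if known == claim.value else Verdict.CONTRADICTED


def respond(claim: Claim, trusted: dict) -> str:
    """Only verified claims are stated; everything else is refused."""
    verdict = verify(claim, trusted)
    if verdict is Verdict.SUPPORTED:
        return f"{claim.subject} {claim.relation} = {claim.value} (verified)"
    if verdict is Verdict.CONTRADICTED:
        return "Rejected: the claim conflicts with trusted knowledge."
    return "Abstained: no trusted evidence for this claim."
```

The key design choice is the third verdict: a missing fact is treated as a reason to abstain, not as permission to answer.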

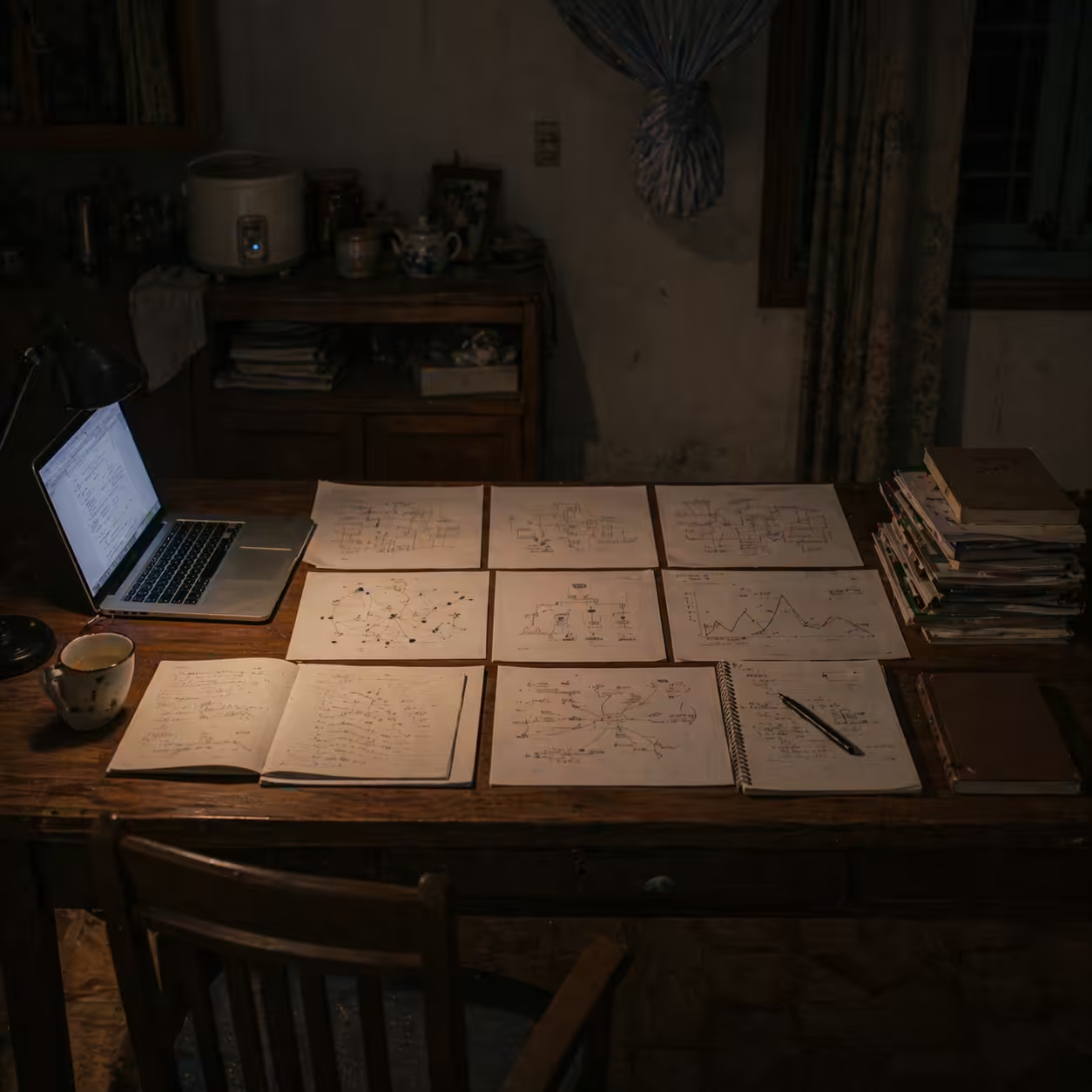

Synthesis: Human-Centered Hybrid Reasoning

The synthesis is not one architecture but a family of integration patterns.

1) Neural-first pipelines

A neural model proposes candidates, then symbolic modules verify or reject each step.

Example pattern:

- LLM generates hypotheses, plans, or proof steps.

- Symbolic solver checks constraints and logical validity.

- Failing branches are pruned; only valid branches continue.

This pattern mirrors systems that pair a neural proposer with a formal prover in mathematics and theorem-proving tasks, such as the olympiad-geometry solver described by Trinh et al.
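
The propose, verify, prune loop can be sketched in a few lines of Python. Here `propose_next_steps` is a hypothetical stand-in for an LLM call, and `is_valid_prefix` is a toy symbolic checker that only accepts steps in a fixed required order; both are assumptions made for illustration.

```python
from typing import Callable, Iterable, List, Tuple

Plan = Tuple[str, ...]

# Toy constraint: steps must appear in exactly this order.
REQUIRED_ORDER: Plan = ("draft", "review", "approve", "publish")


def propose_next_steps(plan: Plan) -> Iterable[str]:
    """Hypothetical stand-in for an LLM; it deliberately includes bad ideas."""
    return ["publish", "draft", "approve", "review", "skip_review"]


def is_valid_prefix(plan: Plan) -> bool:
    """Symbolic check: the plan so far must match the required order."""
    return plan == REQUIRED_ORDER[: len(plan)]


def search(depth: int,
           propose: Callable[[Plan], Iterable[str]],
           check: Callable[[Plan], bool]) -> List[Plan]:
    """Expand plans step by step, keeping only branches the checker accepts."""
    frontier: List[Plan] = [()]
    for _ in range(depth):
        next_frontier: List[Plan] = []
        for plan in frontier:
            for step in propose(plan):
                candidate = plan + (step,)
                if check(candidate):  # failing branches are pruned here
                    next_frontier.append(candidate)
        frontier = next_frontier
    return frontier


valid_plans = search(4, propose_next_steps, is_valid_prefix)
```

Because the checker runs on every partial plan, an invalid step never reaches later stages, no matter how confidently it was proposed.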

2) Symbolic-first pipelines

The system begins with structured domain knowledge and uses neural components to parse unstructured inputs.

Example pattern:

- Clinical, legal, or policy rules define the valid decision space.

- LLM extracts entities and candidate facts from free text.

- Rule engine validates compliance and consistency before output.

This prevents final answers from violating known constraints.
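
A rough sketch of the symbolic-first pattern, using an invented expense policy: the rules are written down first, a regex-based `extract_facts` stands in for LLM extraction so the example runs on its own, and `validate` reports which rules a piece of text violates. The 500 threshold, the rule wording, and all names are assumptions for illustration only.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExpenseFacts:
    amount: Optional[float]
    has_manager_approval: bool


def extract_facts(text: str) -> ExpenseFacts:
    """Stand-in for LLM extraction; a regex keeps the sketch self-contained."""
    match = re.search(r"\$(\d+(?:\.\d+)?)", text)
    amount = float(match.group(1)) if match else None
    approved = "approved by manager" in text.lower()
    return ExpenseFacts(amount, approved)


# The rules define the valid decision space before any text is read.
RULES = [
    ("amount must be stated",
     lambda f: f.amount is not None),
    ("amounts over 500 need manager approval",
     lambda f: f.amount is None or f.amount <= 500 or f.has_manager_approval),
]


def validate(facts: ExpenseFacts) -> List[str]:
    """Return the names of violated rules; an empty list means compliant."""
    return [name for name, holds in RULES if not holds(facts)]


violations = validate(extract_facts("Hotel stay, $720, no sign-off yet"))
```

The extractor can be replaced or improved without touching the rules, which is what makes the final check auditable.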

3) Tight hybrids

Neural and symbolic mechanisms are coupled at training or inference time.

Examples include:

- Logic-regularized objectives.

- Differentiable reasoning modules.

- Program-like constrained decoding.

The goal is to preserve neural flexibility while reducing logical drift.
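
One simple reading of constrained decoding is sketched below: at each step, a small state machine lists the tokens that are allowed, and the decoder picks the best-scoring token among those only. The grammar, the token names, and the fixed `toy_scores` function are hypothetical; a real model would return scores that depend on the prefix.

```python
from typing import Callable, Dict, List

# Hypothetical grammar: state -> {allowed token: next state}.
GRAMMAR: Dict[str, Dict[str, str]] = {
    "start": {"APPROVE": "need_ref", "REJECT": "need_ref", "ABSTAIN": "done"},
    "need_ref": {"rule_1": "done", "rule_2": "done"},
}


def toy_scores(prefix: List[str]) -> Dict[str, float]:
    """Stand-in for model logits; 'maybe' scores highest but is never legal."""
    return {"maybe": 3.0, "APPROVE": 2.0, "REJECT": 1.0,
            "rule_2": 0.5, "rule_1": 0.1, "ABSTAIN": -1.0}


def constrained_greedy_decode(score_fn: Callable[[List[str]], Dict[str, float]],
                              grammar: Dict[str, Dict[str, str]],
                              start: str = "start",
                              end: str = "done") -> List[str]:
    """Greedy decoding restricted to tokens the grammar permits in each state."""
    state, output = start, []
    while state != end:
        allowed = grammar[state]
        scores = score_fn(output)
        # Mask step: tokens outside `allowed` are never considered.
        token = max(allowed, key=lambda t: scores.get(t, float("-inf")))
        output.append(token)
        state = allowed[token]
    return output


decoded = constrained_greedy_decode(toy_scores, GRAMMAR)
```

An unconstrained argmax over `toy_scores` would emit `maybe`; the mask guarantees that every output is a well-formed decision followed by a rule reference, or an explicit abstention.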

Case in Point: Hybrid Contract Reasoning Workflow

A practical workflow for complex contract analysis can look like this:

- Neural extraction: a transformer reads clauses and outputs structured candidate facts.

- Symbolic validation: a rule engine checks obligations, conflicts, approval dependencies, and temporal consistency.

- Uncertainty handling: when constraints are underspecified, the system emits an explicit uncertainty flag instead of guessing.

- Human arbitration: legal reviewers resolve flagged ambiguity.

- Natural-language synthesis: verified conclusions are rendered into plain language with trace references.

This design turns AI from an answer generator into a reasoning assistant. The human remains accountable, while the system increases speed, coverage, and audit quality.
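
The validation and uncertainty-handling steps of this workflow might look like the sketch below. It checks one kind of temporal consistency (an obligation should not fall due before its prerequisite) and flags missing information for human review. The `Obligation` fields, the status labels, and the sample clauses are assumptions, not a real contract schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional, Tuple


@dataclass
class Obligation:
    clause_id: str                     # trace reference back to the contract text
    description: str
    due: Optional[date]                # None when extraction found no date
    depends_on: Optional[str] = None   # clause_id of a prerequisite, if any


def review(obligations: List[Obligation]) -> List[Tuple[str, str, str]]:
    """Return (clause_id, status, note) for each obligation with a dependency."""
    by_id: Dict[str, Obligation] = {o.clause_id: o for o in obligations}
    findings = []
    for o in obligations:
        if o.depends_on is None:
            continue
        prereq = by_id.get(o.depends_on)
        if prereq is None or prereq.due is None or o.due is None:
            # Underspecified: emit a flag for a reviewer instead of guessing.
            findings.append((o.clause_id, "UNCERTAIN", "missing clause or date"))
        elif prereq.due > o.due:
            findings.append((o.clause_id, "CONFLICT",
                             f"due before prerequisite {prereq.clause_id}"))
        else:
            findings.append((o.clause_id, "VERIFIED",
                             f"consistent with {prereq.clause_id}"))
    return findings


# Hypothetical extracted facts for illustration.
clauses = [
    Obligation("4.1", "Deliver audit report", date(2026, 3, 1)),
    Obligation("4.2", "Board approval of report", date(2026, 2, 15), depends_on="4.1"),
    Obligation("5.1", "Final payment", None, depends_on="4.2"),
]
findings = review(clauses)
```

Each finding carries the clause identifier, so the plain-language summary produced in the last step can cite the exact clause behind every conclusion or flag.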

Critical Reflection: Trade-offs

Neuro-symbolic systems are not free wins. They introduce real engineering and organizational costs.

Technical costs

- Knowledge engineering overhead for rules and ontologies.

- Symbol grounding challenges between natural text and formal schemas.

- Higher latency from verification loops.

- Complexity in debugging across heterogeneous components.

Organizational costs

- Need for cross-functional talent: ML plus formal methods.

- Governance ownership for rule quality and update cycles.

- Clear liability boundaries for abstentions and escalations.

Strategic upside

- Better auditability and compliance posture.

- Stronger out-of-distribution robustness in rule-heavy domains.

- Higher trust through explicit uncertainty handling.

For regulated and mission-critical systems, that trade-off can be favorable even when throughput is lower than with pure neural generation.

Horizon: From Black-Box Fluency to Accountable Reasoning

The next AI wave will likely be judged less by stylistic fluency and more by decision accountability.

Post-LLM architecture shifts the optimization target:

- From maximizing token-prediction accuracy to fitness for institutional use.

- From one-shot generation to validated reasoning loops.

- From opaque confidence to inspectable evidence trails.

Teams that invest now in neural-symbolic design patterns, tooling, and governance will be better positioned for high-trust automation.

The frontier is no longer just bigger models. It is better reasoning architecture.

References

- Nawaz, U. et al. A review of neuro-symbolic AI integrating reasoning and learning for advanced cognitive systems. Intelligent Systems with Applications 26, 200541 (2025).

- Samuel, A. Neurosymbolic Integration Approaches for Hybrid Reasoning in Modern AI Systems. International Journal of Artificial Intelligence and Machine Learning Research and Development 6(2), 13-18 (2025).

- Cao, S., Yao, Z., Hou, L. and Li, J. A General Neural-Symbolic Architecture for Knowledge-Intensive Complex Question Answering. Neurosymbolic AI (IOS Press, 2025).

- Rani, M., Mishra, B. and Thakker, D. Advancing Symbolic Integration in Large Language Models: Beyond Conventional Neurosymbolic AI. arXiv:2510.21425 (2025).

- Prenosil, G. A. et al. Neuro-symbolic AI for auditable cognitive information extraction from medical reports. Communications Medicine 5, 491 (2025).

- Trinh, T. H. et al. Solving Olympiad Geometry without human demonstrations. Nature 625, 476-482 (2024).

- IBM Research. Neuro-symbolic AI. https://www.research.ibm.com/topics/neuro-symbolic-ai

- Neurosymbolic AI: The Hybrid That Could Finally Fix the Hallucination Problem. NerdZap, April 2026.

Published at: Apr 23, 2026 · Modified at: May 5, 2026
