The Efficiency Drug and the Illusion of Learning

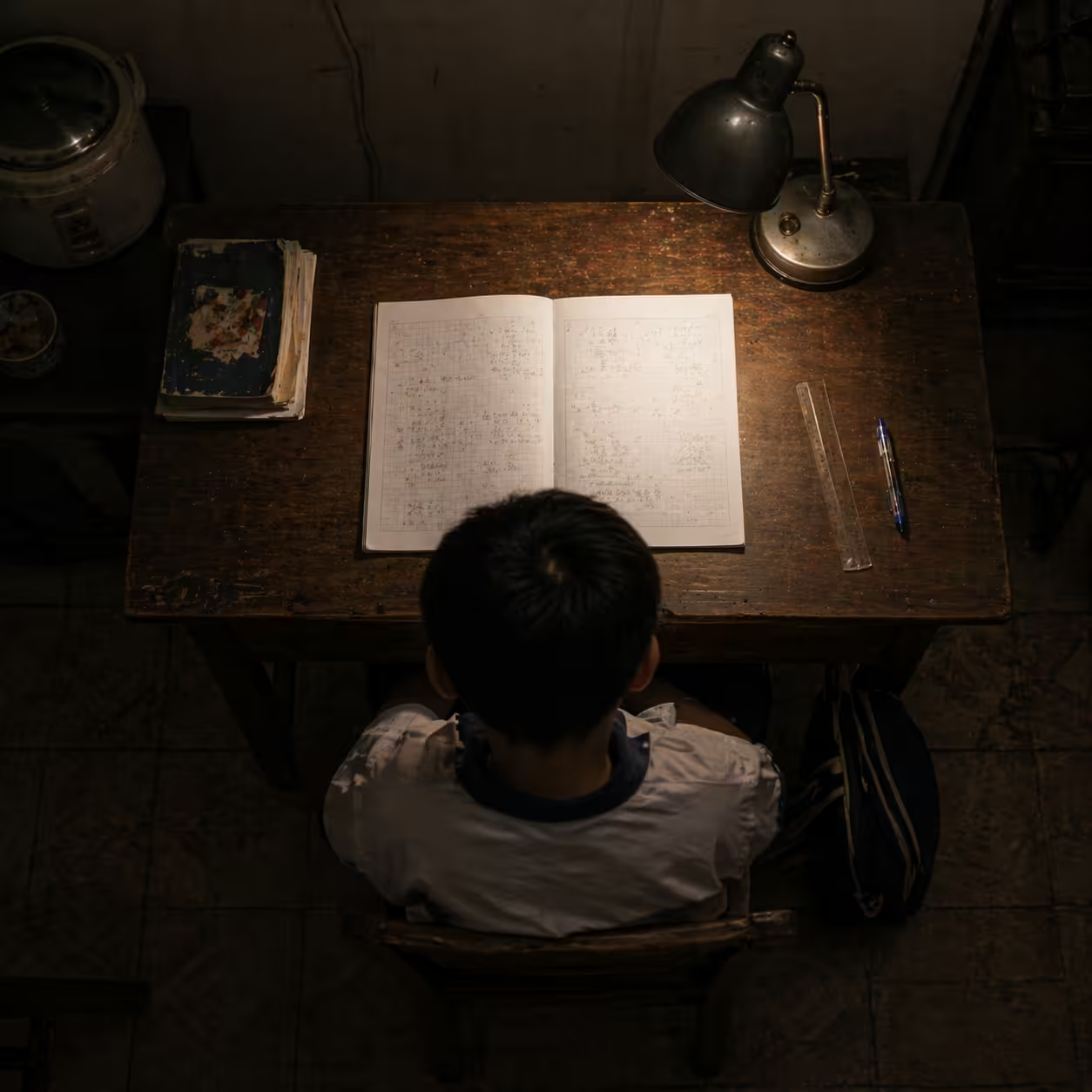

Efficiency is the most seductive drug of the modern era.

For decades, we treated the removal of mental friction as an unquestioned good. We celebrated when GPS replaced maps and when calculators replaced long division. But in 2026, generative AI is proving to be less like a faster car and more like a cognitive painkiller. It numbs the discomfort of difficult tasks, yet often leaves the root knowledge gap untreated.

By the time the pain of a complex project disappears, the muscle of understanding may already be weakening.

This is the Illusion of Learning: polished AI output can mask a steady decline in the user’s cognitive architecture. We can now ship work that looks perfect while becoming less capable at the craft itself.

Deconstruction: First Principles of Thinking Infrastructure

To understand why AI can make us feel smarter while making us less capable, we need first principles from cognitive science. Learning is not passive recording. It is the residue of thought. We remember what we struggle to process.

Cognitive load theory describes mental effort in terms of three kinds of load:

- Intrinsic load: the inherent difficulty of the topic.

- Extraneous load: wasted effort caused by poor interfaces, unclear instructions, or environmental noise.

- Germane load: productive effort that builds durable mental schemas.

The 2026 risk is that generative AI removes not only extraneous load, but also germane load. When we ask AI to summarize, reason, or code everything for us, we are not merely offloading chores. We are externalizing the exact cognitive work that builds mastery.

Cognitive self-check: if AI vanished tomorrow, could you explain the logic of your last AI-assisted solution clearly to a colleague? If not, you may have outsourced rather than learned.

Biological Cost of the Easy Button

The brain follows a use-it-or-lose-it principle through neuroplasticity: when deep problem-solving declines, less-used neural pathways can weaken through synaptic pruning. Emerging neuroscience commentary also raises the risk of dendritic atrophy when cognitive challenge is chronically avoided.

The implication is practical: convenience can quietly reshape the brain toward dependence.

Friction: Fluency Traps and the Performance Paradox

The current friction is a performance paradox: output rises while core competence falls.

Large language models excel at surface fluency. Their text is polished, grammatical, and confident, creating a fluency bias. Because the output sounds expert, users stop interrogating it.

At the same time, learning is becoming less social. Where learners once turned to mentors and peers, many now default to private AI chats. That weakens debate, explanation, and situated judgment, the very capabilities needed in ambiguous real-world contexts with no single correct answer.

Evidence of Skill Decay

The signal is no longer anecdotal. Multiple studies report a negative relationship between heavy AI reliance and independent critical reasoning.

| Study or source | Context | Impact on mastery |
|---|---|---|
| SBS Swiss Business School (2025) | 666 participants across age groups | Frequent AI use linked to significantly lower critical-thinking scores |
| Shen and Tamkin (2026) | Developers learning a new library | AI-assisted participants scored 17% lower on conceptual quizzes than hand-coding peers |
| MIT Media Lab (2025) | Writing and memory tasks | 83.3% of AI users could not recall a single sentence they had just produced |

One especially concerning finding from the MIT study was weaker brain-wave (EEG) signatures of deep engagement in the heavy-AI condition, suggesting cognitive passivity when the tool performs most of the intellectual lifting.

Synthesis: From Answer Engine to Socratic Scaffold

The remedy is not anti-AI. It is a mindset shift from product thinking to process thinking.

Under the Locuno Synergy Framework, AI should behave as a Socratic scaffold: a system that supports reasoning while preserving ownership of thought. Scaffolds are temporary structures. They help build capability, then recede as competence strengthens.

Socratic Inquiry Framework (SIF)

Operationally, this means moving beyond zero-shot answer generation toward inquiry-first interaction:

- Strategy anchoring: the AI first decides whether to ask a question before proposing a solution.

- Template retrieval: the AI selects a questioning pattern, such as probing assumptions or exploring consequences.

- Reflection loops: the user must articulate a rationale before receiving implementation detail.
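The three SIF steps can be sketched as a small inquiry-first loop. This is a hypothetical illustration, not the framework's published implementation: the class `SocraticSession`, the two question templates, and the 15-word threshold for a "substantive" rationale are all assumptions chosen for readability.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical question templates; pattern names mirror the SIF description.
QUESTION_TEMPLATES = {
    "probe_assumptions": "What are you assuming about {topic} that might not hold?",
    "explore_consequences": "If your approach to {topic} is right, what else must be true?",
}

# Assumed threshold for what counts as a stated rationale (illustrative only).
MIN_RATIONALE_WORDS = 15


@dataclass
class SocraticSession:
    topic: str
    rationales: list[str] = field(default_factory=list)
    questions_asked: int = 0

    def next_turn(self, user_message: str | None = None) -> str:
        # Record whatever reasoning the user has offered so far.
        if user_message:
            self.rationales.append(user_message)

        # Strategy anchoring: keep asking until a substantive rationale exists.
        if not self._has_rationale():
            pattern = self._choose_template()
            self.questions_asked += 1
            return "QUESTION: " + QUESTION_TEMPLATES[pattern].format(topic=self.topic)

        # Reflection loop: implementation detail unlocks only after the user explains.
        return "UNLOCKED: implementation detail can now build on your rationale."

    def _has_rationale(self) -> bool:
        return any(len(r.split()) >= MIN_RATIONALE_WORDS for r in self.rationales)

    def _choose_template(self) -> str:
        # Template retrieval: probe assumptions first, then push on consequences.
        return "probe_assumptions" if self.questions_asked == 0 else "explore_consequences"


if __name__ == "__main__":
    session = SocraticSession(topic="state management")
    print(session.next_turn())          # asks about assumptions
    print(session.next_turn("Redux?"))  # too thin a rationale: asks about consequences
```

A real assistant would generate its questions with a model rather than from fixed strings; the point of the sketch is the ordering it enforces: question first, rationale second, implementation last.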

In advanced orchestration settings, teams can optimize Socratic agents for information gain rather than mere answer accuracy. In plain terms, good AI reduces uncertainty in the user’s own mind by asking questions that force model revision, not by replacing judgment.
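"Information gain" has a standard meaning: the expected drop in entropy of a belief after observing an answer. A minimal sketch, assuming the agent keeps a probability distribution over hypotheses about what the learner currently understands, plus a table of how likely each answer is under each hypothesis. The hypothesis names, questions, and probabilities below are hypothetical values for illustration, not data from any study.

```python
import math


def entropy(dist: dict[str, float]) -> float:
    """Shannon entropy (in bits) of a discrete distribution."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def expected_information_gain(prior: dict[str, float],
                              likelihood: dict[str, dict[str, float]]) -> float:
    """H(prior) minus the expected entropy of the posterior after hearing the answer.

    prior:      {hypothesis: P(h)}
    likelihood: {hypothesis: {answer: P(answer | h)}}
    """
    answers = {a for table in likelihood.values() for a in table}
    expected_posterior_entropy = 0.0
    for a in answers:
        # Marginal probability of this answer: P(a) = sum over h of P(h) * P(a | h).
        p_a = sum(prior[h] * likelihood[h].get(a, 0.0) for h in prior)
        if p_a == 0:
            continue
        # Bayes' rule: P(h | a) = P(h) * P(a | h) / P(a).
        posterior = {h: prior[h] * likelihood[h].get(a, 0.0) / p_a for h in prior}
        expected_posterior_entropy += p_a * entropy(posterior)
    return entropy(prior) - expected_posterior_entropy


def pick_question(prior, questions):
    """Choose the candidate question with the highest expected information gain."""
    return max(questions, key=lambda q: expected_information_gain(prior, questions[q]))


# Hypothetical beliefs about a learner (illustrative numbers only).
prior = {"understands_ordering": 0.5, "assumes_sync_order": 0.5}
questions = {
    # "What happens if two events arrive out of order?"
    "out_of_order": {
        "understands_ordering": {"correct": 0.9, "wrong": 0.1},
        "assumes_sync_order": {"correct": 0.2, "wrong": 0.8},
    },
    # "Does your code compile?" (both kinds of learner tend to answer the same way)
    "compiles": {
        "understands_ordering": {"yes": 0.95, "no": 0.05},
        "assumes_sync_order": {"yes": 0.95, "no": 0.05},
    },
}

if __name__ == "__main__":
    print(pick_question(prior, questions))  # out_of_order
```

The design choice matters: a question every learner answers the same way carries zero information gain, however easy it is to ask, while a question that separates genuine understanding from a misconception is worth about 0.4 bits here.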

Case in Point: Alex and Hollow Success

Alex, a senior developer, used AI to move quickly through a new reactive framework. The application worked. Then his manager asked: "Why this state-management pattern over the alternative?"

Alex froze. He had prompted a system into completion, but had not architected the logic himself.

This is the supervision paradox: we are expected to supervise systems performing tasks we are gradually losing the skill to evaluate.

To recover, Alex changed his protocol. For new tasks, he asks AI to act as a skeptic agent and temporarily forbids direct code output.
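Alex's no-code rule lives mainly in his prompt, but a small client-side check can back it up. A minimal sketch, assuming replies arrive as Markdown text; `SKEPTIC_PROMPT` and `enforce_no_code` are hypothetical names, and detecting code by looking for fenced blocks is a deliberately coarse heuristic that will miss unfenced snippets.

```python
import re

# Hypothetical system prompt for the "skeptic agent" mode (wording is an assumption).
SKEPTIC_PROMPT = (
    "Act as a skeptical senior reviewer. Do not write code. "
    "Ask one probing question about my design, then wait for my reasoning."
)

FENCE = "`" * 3  # three backticks: the opening of a Markdown code fence
# Matches a fence at the start of any line in the reply.
CODE_FENCE = re.compile("^" + re.escape(FENCE), re.MULTILINE)


def enforce_no_code(reply: str) -> str:
    """Pass questions through; block replies that contain a fenced code block."""
    if CODE_FENCE.search(reply):
        return "[blocked] The reply contained code. Ask the model to restate it as a question."
    return reply


if __name__ == "__main__":
    print(enforce_no_code("If this stream becomes asynchronous, what orders your UI updates?"))
```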

Example prompt style:

- AI asks: "If this stream becomes asynchronous, how will you prevent race conditions in UI state transitions?"

- User response: reason explicitly, then implement manually.
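The skeptic's question in the example above has several reasonable answers. One widely used pattern is "latest request wins": tag each request with an increasing id and discard any response that is no longer the newest. The sketch below uses Python's asyncio as a stand-in for whatever async stream a UI framework provides; `LatestWinsStore` and `fake_fetch` are hypothetical names, not part of the framework Alex used.

```python
from __future__ import annotations

import asyncio


async def fake_fetch(query: str, delay: float) -> str:
    """Stand-in for a network call whose latency varies."""
    await asyncio.sleep(delay)
    return f"results for {query!r}"


class LatestWinsStore:
    """UI state that only accepts the result of the most recently issued request."""

    def __init__(self) -> None:
        self.state: str | None = None
        self._latest = 0  # id of the most recently issued request

    async def load(self, query: str, delay: float) -> None:
        self._latest += 1
        request_id = self._latest
        result = await fake_fetch(query, delay)
        # A newer request was issued while this one was in flight: drop the stale result.
        if request_id != self._latest:
            return
        self.state = result


async def main() -> str | None:
    store = LatestWinsStore()
    # The user types "re" then "react"; the older request is slower and resolves last.
    await asyncio.gather(store.load("re", 0.05), store.load("react", 0.01))
    return store.state


if __name__ == "__main__":
    print(asyncio.run(main()))  # results for 'react'
```

Without the id check, the slow "re" response would overwrite the newer "react" result. Reasoning through exactly this failure before writing the guard is the kind of understanding the skeptic prompt is meant to force.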

Alex is slower in the short term, but his thinking infrastructure remains intact. He does not just finish tasks; he compounds expertise.

Critical Reflection: Ethics of Cognitive Debt

We are accumulating cognitive debt: borrowing speed from our future competence.

This debt is unevenly distributed. People with strong metacognitive habits often use AI to accelerate genuine learning. Those still building foundations face the greatest atrophy risk.

If AI substitutes for social, embodied learning in teams, we risk degrading empathy, ethical judgment, and our capacity to navigate ambiguity.

Horizon: Stewardship of Mind

The future of AI is not answer generation. It is understanding generation.

As we approach 2027, the highest-value professionals will not be the best prompters, but the most epistemically vigilant: those who verify, critique, and steer AI output with discipline.

We are also moving toward generative collective intelligence, where AI supports thinkscapes that help groups reason together rather than isolating individuals into private output loops.

Strategic path forward:

- Embrace resistance: mental effort is a signal that cognitive muscle is growing.

- Verify first: fluent output is not evidence of truth.

- Choose scaffolds over pills: use tools that preserve engagement and demand reflection.

The line between mastery and dependence is intent. Use AI to bypass thought, and you trade tomorrow’s skill for today’s speed. Use AI to challenge thought, and you architect an intelligence no algorithm can replace.

Build your thinking infrastructure.

References

- Third Space Learning (2026). Cognitive Offloading and AI in Schools: What It Is and Why It Matters.

- SBS Swiss Business School (2025). AI and Critical Thinking Decline study.

- MIT Media Lab (2025). Cognitive Atrophy report and related memory findings.

- Shen and Tamkin (2026). How AI Impacts Skill Formation. arXiv.

- The Socratic Inquiry Framework literature (2026). arXiv.

- Fluency bias and LLM evaluation literature (2026). arXiv.

Sources

- https://thirdspacelearning.com/blog/cognitive-offloading/

- https://erigo.se/en/articles/cognitive-impairment-in-the-ai-era-from-medical-diagnosis-to-technology-induced-deskilling

- https://arxiv.org/html/2601.19913v2

- https://www.tandfonline.com/doi/full/10.1080/23311983.2026.2631304

- https://arxiv.org/html/2601.06171v1


- https://www.researchgate.net/publication/387701784_AI_Tools_in_Society_Impacts_on_Cognitive_Offloading_and_the_Future_of_Critical_Thinking

- https://ideas.repec.org/a/gam/jsoctx/v15y2025i1p6-d1559787.html

- https://www.sparknify.com/post/cognitive-collapse-mit-s-alarming-study-on-chatgpt-and-sparknify-s-vision-for-a-smarter-human-ai-en

- https://sites.manchester.ac.uk/humteachlearn/2026/01/08/aied-2025/

- https://arxiv.org/html/2502.00341v1

- https://arxiv.org/html/2602.01598v1

- https://www.emergentmind.com/topics/socratic-dialogue-scaffolds

- https://arxiv.org/pdf/2602.03414

- https://arxiv.org/html/2510.27410v1

- https://arxiv.org/html/2601.20245v1

- https://arxiv.org/pdf/2311.07945

- https://www.preprints.org/manuscript/202603.0350

- https://arxiv.org/html/2602.17671v1

- https://www.researchgate.net/publication/399708028_From_Individual_Prompts_to_Collective_Intelligence_Mainstreaming_Generative_AI_in_the_Classroom

Published at: Apr 24, 2026 · Modified at: May 5, 2026